Hadoop Setup (Fully Distributed)


Node layout:

| bigdata-master | bigdata-slave1 | bigdata-slave2 |
| --- | --- | --- |
| NameNode | NodeManager | NodeManager |
| SecondaryNameNode | DataNode | DataNode |
| ResourceManager | | |
| NodeManager | | |
| DataNode | | |

Contents

I. JDK Installation

II. Hadoop Installation

I. JDK Installation

jdk-8u212 download link: https://pan.baidu.com/s/1avN5VPdswFlMZQNeXReAHg

Extraction code: 50w6

1. Extract the archive

[root@bigdata-master software]# tar -zxvf jdk-8u212-linux-x64.tar.gz -C /opt/module/

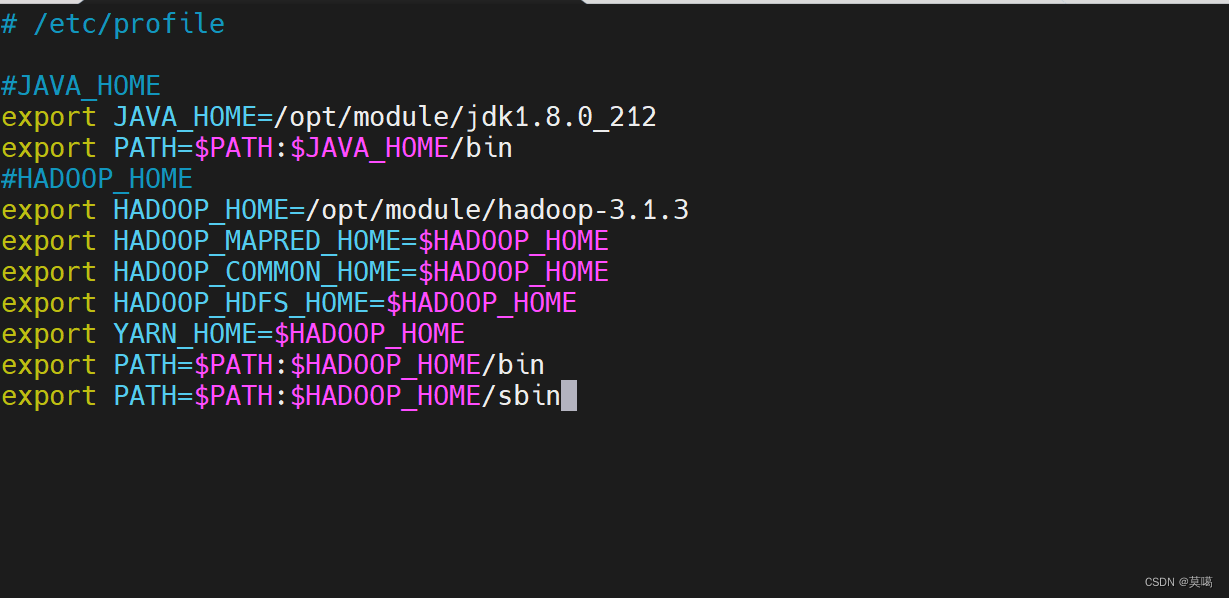

2. Environment variables

vim /etc/profile

Add the following configuration:

```
export JAVA_HOME=/opt/module/jdk1.8.0_212
export PATH=$PATH:$JAVA_HOME/bin
```

Save and exit with :wq.

Apply the configuration:

source /etc/profile

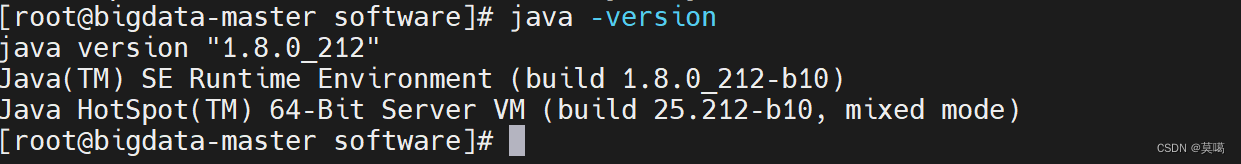
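Editing /etc/profile by hand works, but repeating the step on three nodes makes duplicate lines easy to introduce. The following is a minimal sketch of an idempotent append. The `append_once` helper and the `PROFILE` variable are illustrative names, not part of the original steps, and `PROFILE` defaults to a scratch file so the sketch can be tried without touching /etc/profile.

```shell
#!/usr/bin/env bash
# Sketch: append the JDK variables to a profile file only if they are missing.
# PROFILE points at a scratch file by default (an assumption for safe
# experimentation); on a real node it would be /etc/profile.
PROFILE="${PROFILE:-$(mktemp)}"

append_once() {
  # $1 = exact line to add, $2 = target file
  grep -qxF -- "$1" "$2" 2>/dev/null || printf '%s\n' "$1" >> "$2"
}

append_once 'export JAVA_HOME=/opt/module/jdk1.8.0_212' "$PROFILE"
append_once 'export PATH=$PATH:$JAVA_HOME/bin' "$PROFILE"
# A repeated call finds the line already present and adds nothing.
append_once 'export JAVA_HOME=/opt/module/jdk1.8.0_212' "$PROFILE"

cat "$PROFILE"
```

The single quotes keep `$PATH` and `$JAVA_HOME` unexpanded, so they are written to the file literally and resolved only when the profile is sourced.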

3. Check the version

java -version

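4. Passwordless SSH login (run on all three nodes; this step is required)

ssh-keygen -t rsa

When prompted for a file location and passphrase, enter nothing and press Enter.

This creates id_rsa and id_rsa.pub in /root/.ssh.

cd /root/.ssh

cat id_rsa.pub >> authorized_keys

Append the contents of each other node's id_rsa.pub to this node's authorized_keys file (repeat on every node).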
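Copying the public keys by hand can be replaced with `ssh-copy-id`, which appends a key to the remote authorized_keys file. The sketch below is one possible way to do that and is not the procedure the original steps use. It assumes the three hostnames resolve on every node (for example through /etc/hosts), and the `run` helper and `DRY_RUN` switch are illustrative names. With the default `DRY_RUN=1` it only prints the commands.

```shell
#!/usr/bin/env bash
# Sketch: push this node's public key to every node, then verify key-based login.
# Assumes the hostnames below resolve on this machine.
NODES="bigdata-master bigdata-slave1 bigdata-slave2"
DRY_RUN="${DRY_RUN:-1}"   # 1 = print commands only, 0 = execute them

run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

for host in $NODES; do
  # Append this node's public key to root's authorized_keys on $host.
  run ssh-copy-id -i /root/.ssh/id_rsa.pub "root@${host}"
  # BatchMode=yes makes ssh fail instead of prompting for a password,
  # so this command only succeeds once key-based login works.
  run ssh -o BatchMode=yes "root@${host}" hostname
done
```

Run it on each of the three nodes with `DRY_RUN=0` once the printed commands look right.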

II. Hadoop Installation

hadoop-3.1.3 download link: https://pan.baidu.com/s/11yFkirCiT6tdo_9i1jWwkw

Extraction code: stgv

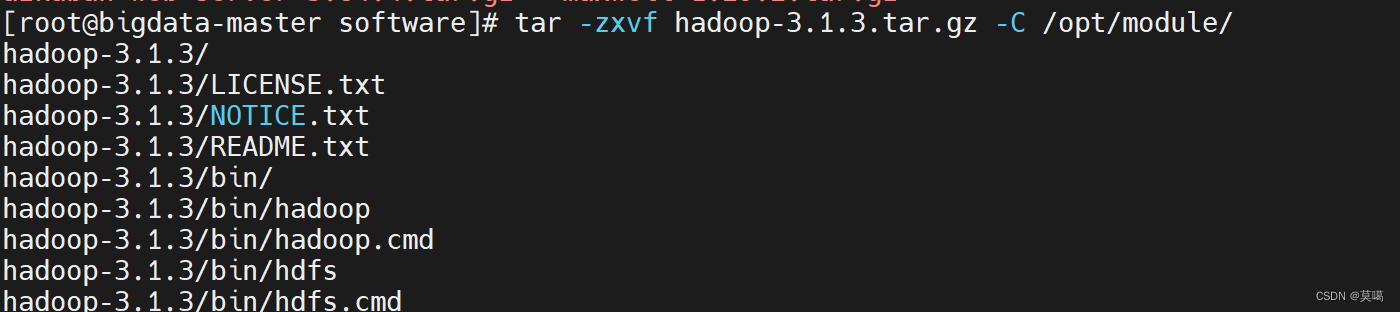

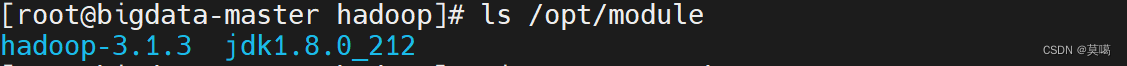

1. Extract the archive

tar -zxvf hadoop-3.1.3.tar.gz -C /opt/module/

2. Configuration files

cd /opt/module/hadoop-3.1.3/etc/hadoop/

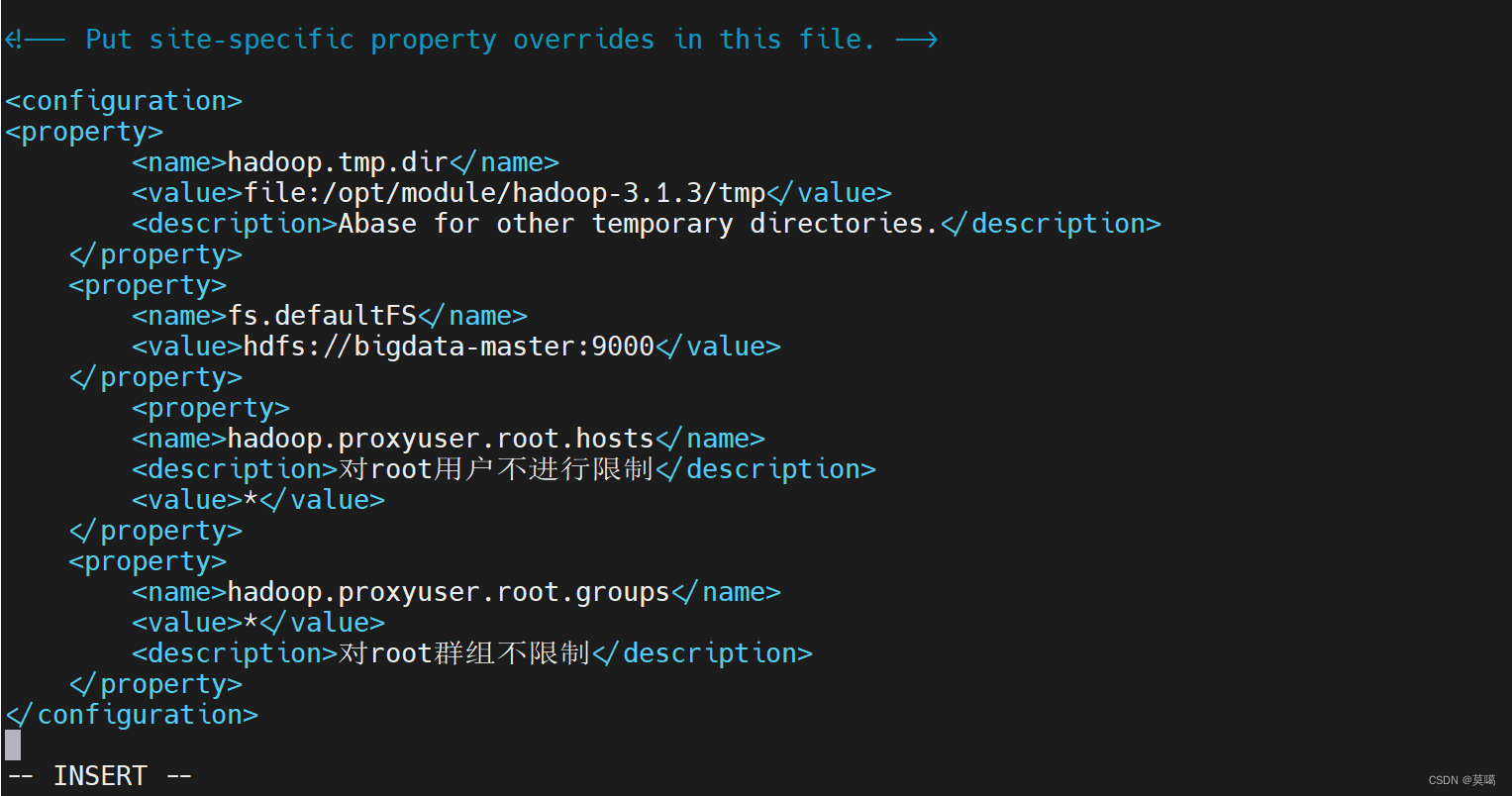

(1). core-site.xml

vim core-site.xml

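Set the following properties:

| Property | Value | Description |
| --- | --- | --- |
| hadoop.tmp.dir | file:/opt/module/hadoop-3.1.3/tmp | A base for other temporary directories. |
| fs.defaultFS | hdfs://bigdata-master:9000 | |
| hadoop.proxyuser.root.hosts | * | No restriction on the hosts from which root may act as a proxy user. |
| hadoop.proxyuser.root.groups | * | No restriction on the groups whose users root may impersonate. |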

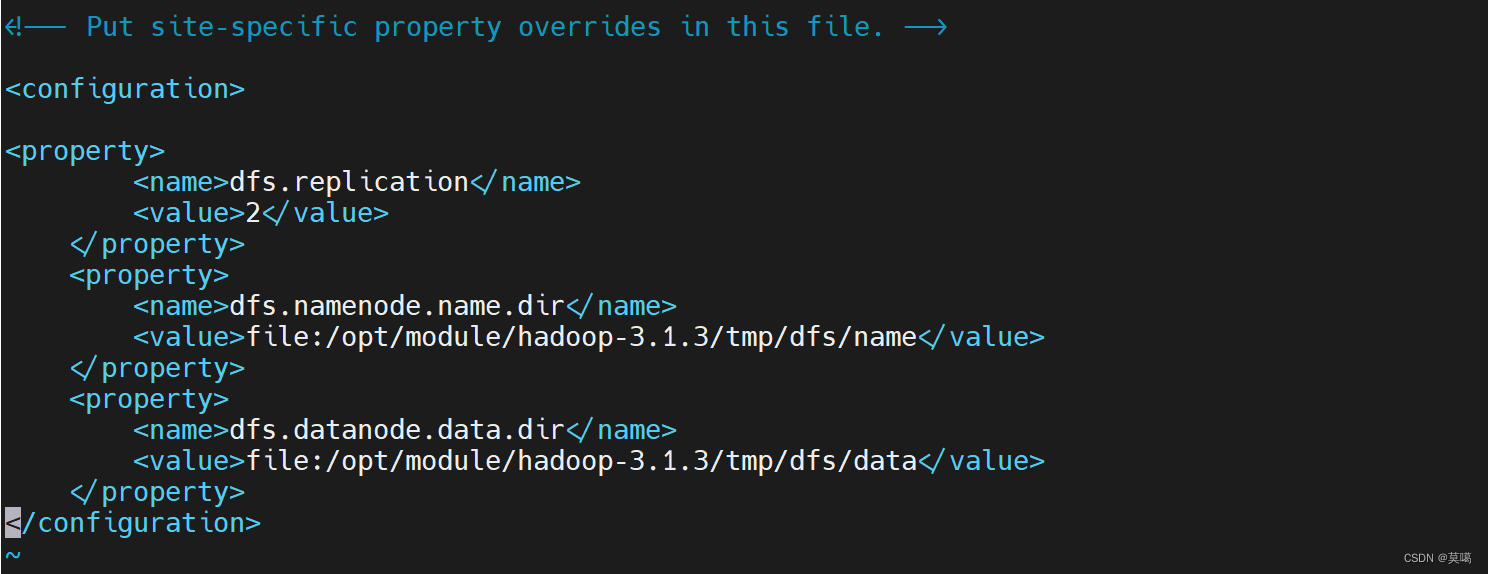
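The properties for core-site.xml go inside a `<configuration>` element as `<property>` entries. The following sketch writes the whole file with a heredoc. `CONF_DIR` is an illustrative variable that defaults to a scratch directory so the sketch is safe to try; on the cluster it would be /opt/module/hadoop-3.1.3/etc/hadoop.

```shell
#!/usr/bin/env bash
# Sketch: write core-site.xml with the four properties from this step.
# CONF_DIR defaults to a scratch directory (an assumption for safe testing).
CONF_DIR="${CONF_DIR:-$(mktemp -d)}"

# The quoted 'EOF' stops the shell from expanding anything inside the heredoc.
cat > "$CONF_DIR/core-site.xml" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>file:/opt/module/hadoop-3.1.3/tmp</value>
    <description>A base for other temporary directories.</description>
  </property>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://bigdata-master:9000</value>
  </property>
  <property>
    <!-- Hosts from which root may act as a proxy user: no restriction -->
    <name>hadoop.proxyuser.root.hosts</name>
    <value>*</value>
  </property>
  <property>
    <!-- Groups whose users root may impersonate: no restriction -->
    <name>hadoop.proxyuser.root.groups</name>
    <value>*</value>
  </property>
</configuration>
EOF

echo "wrote $CONF_DIR/core-site.xml"
```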

(2). hdfs-site.xml

vim hdfs-site.xml

Set the following properties:

| Property | Value |
| --- | --- |
| dfs.replication | 2 |
| dfs.namenode.name.dir | file:/opt/module/hadoop-3.1.3/tmp/dfs/name |
| dfs.datanode.data.dir | file:/opt/module/hadoop-3.1.3/tmp/dfs/data |

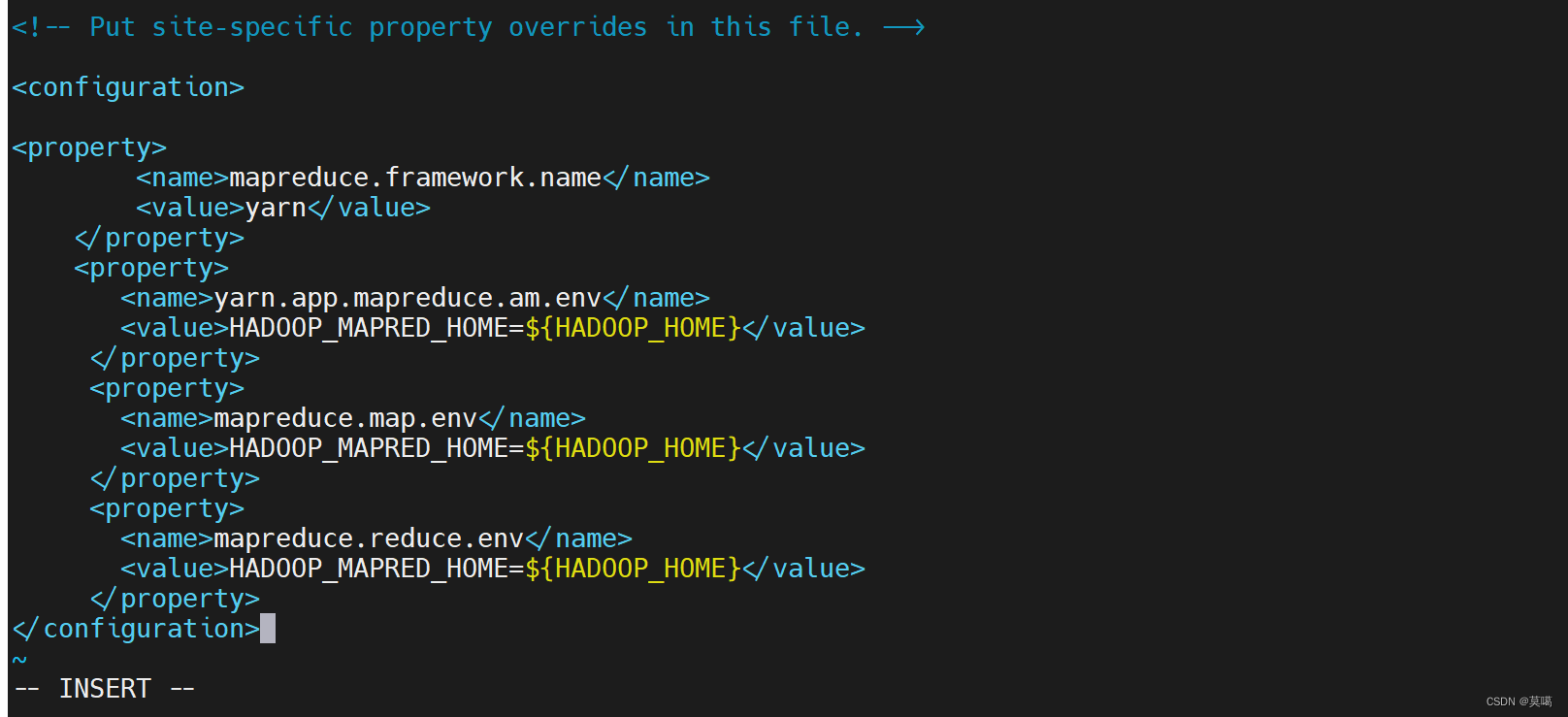
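Because every entry in hdfs-site.xml has the same name/value shape, the file can also be generated from a small helper. This is a sketch only: the `property` function and `CONF_DIR` are illustrative names, and `CONF_DIR` defaults to a scratch directory rather than the real etc/hadoop directory.

```shell
#!/usr/bin/env bash
# Sketch: build hdfs-site.xml from name/value pairs.
# CONF_DIR defaults to a scratch directory (an assumption for safe testing).
CONF_DIR="${CONF_DIR:-$(mktemp -d)}"

# Print one <property> element; $1 = name, $2 = value.
property() {
  printf '  <property>\n    <name>%s</name>\n    <value>%s</value>\n  </property>\n' "$1" "$2"
}

{
  echo '<?xml version="1.0" encoding="UTF-8"?>'
  echo '<configuration>'
  property dfs.replication 2
  property dfs.namenode.name.dir file:/opt/module/hadoop-3.1.3/tmp/dfs/name
  property dfs.datanode.data.dir file:/opt/module/hadoop-3.1.3/tmp/dfs/data
  echo '</configuration>'
} > "$CONF_DIR/hdfs-site.xml"

cat "$CONF_DIR/hdfs-site.xml"
```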

(3). mapred-site.xml

vim mapred-site.xml

Set the following properties:

| Property | Value |
| --- | --- |
| mapreduce.framework.name | yarn |
| yarn.app.mapreduce.am.env | HADOOP_MAPRED_HOME=${HADOOP_HOME} |
| mapreduce.map.env | HADOOP_MAPRED_HOME=${HADOOP_HOME} |
| mapreduce.reduce.env | HADOOP_MAPRED_HOME=${HADOOP_HOME} |

Save and exit (:wq).

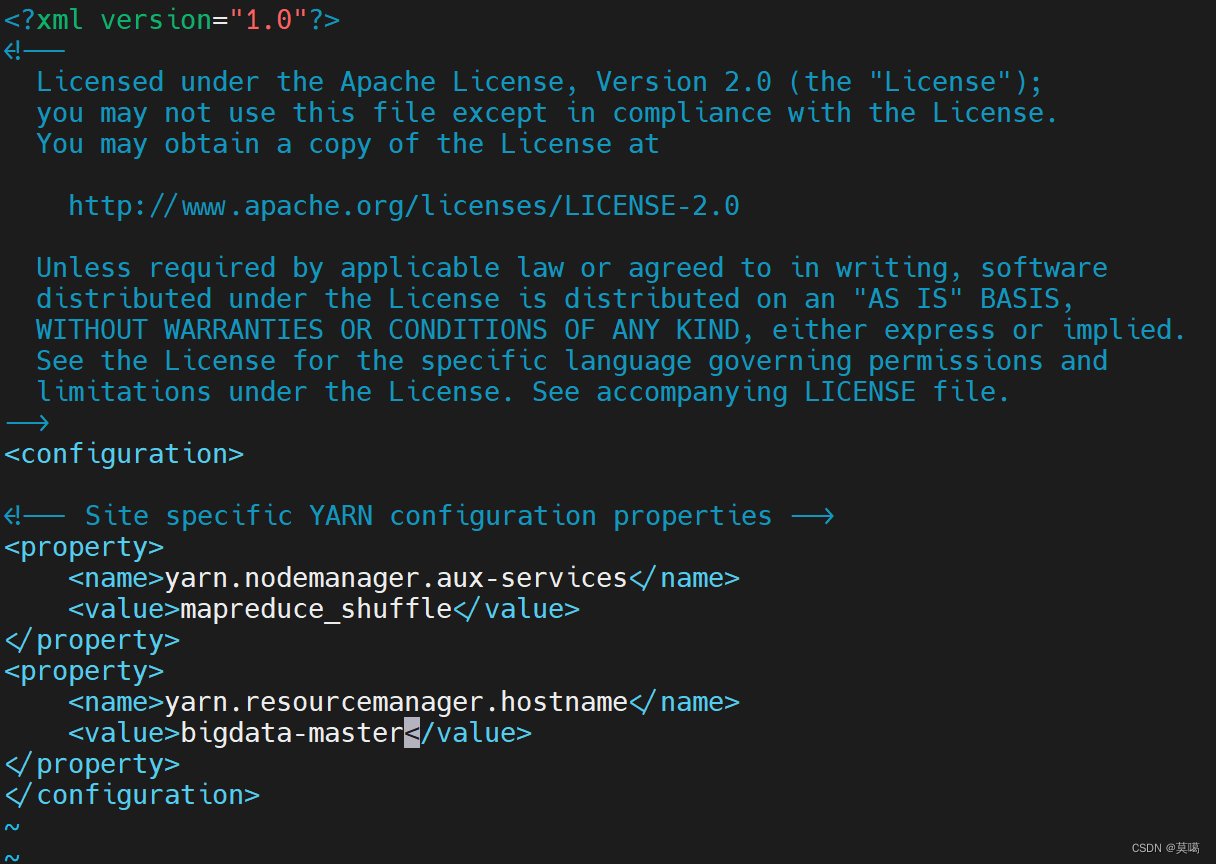
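In mapred-site.xml the three `*.env` properties share one value, and that value has to reach the file with `${HADOOP_HOME}` unexpanded. The sketch below generates the file with a loop and keeps the placeholder literal by using single quotes. `CONF_DIR` is an illustrative variable that defaults to a scratch directory.

```shell
#!/usr/bin/env bash
# Sketch: generate mapred-site.xml; the three *.env properties share one value.
# CONF_DIR defaults to a scratch directory (an assumption for safe testing).
CONF_DIR="${CONF_DIR:-$(mktemp -d)}"

{
  echo '<?xml version="1.0" encoding="UTF-8"?>'
  echo '<configuration>'
  printf '  <property>\n    <name>mapreduce.framework.name</name>\n    <value>yarn</value>\n  </property>\n'
  for name in yarn.app.mapreduce.am.env mapreduce.map.env mapreduce.reduce.env; do
    # Single quotes keep ${HADOOP_HOME} literal in the generated file.
    printf '  <property>\n    <name>%s</name>\n    <value>HADOOP_MAPRED_HOME=${HADOOP_HOME}</value>\n  </property>\n' "$name"
  done
  echo '</configuration>'
} > "$CONF_DIR/mapred-site.xml"

cat "$CONF_DIR/mapred-site.xml"
```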

(4). yarn-site.xml

vim yarn-site.xml

Set the following properties:

| Property | Value |
| --- | --- |
| yarn.nodemanager.aux-services | mapreduce_shuffle |
| yarn.resourcemanager.hostname | bigdata-master |

Save and exit (:wq).

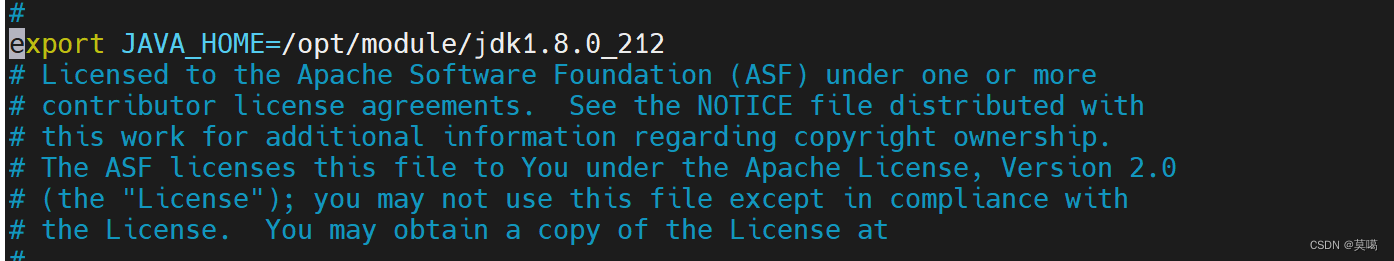
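A malformed XML file is a common reason for daemons failing at startup, so it can help to check yarn-site.xml right after writing it. The sketch below writes the two properties and, if `xmllint` happens to be installed (an assumption; the check is skipped otherwise), confirms the file is well-formed. `CONF_DIR` is an illustrative variable that defaults to a scratch directory.

```shell
#!/usr/bin/env bash
# Sketch: write yarn-site.xml and optionally check that it is well-formed XML.
# CONF_DIR defaults to a scratch directory (an assumption for safe testing).
CONF_DIR="${CONF_DIR:-$(mktemp -d)}"

cat > "$CONF_DIR/yarn-site.xml" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
  </property>
  <property>
    <name>yarn.resourcemanager.hostname</name>
    <value>bigdata-master</value>
  </property>
</configuration>
EOF

# xmllint is optional; skip the check when it is not on PATH.
if command -v xmllint >/dev/null 2>&1; then
  xmllint --noout "$CONF_DIR/yarn-site.xml" && echo "yarn-site.xml is well-formed"
fi
```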

(5). yarn-env.sh

vim yarn-env.sh

export JAVA_HOME=/opt/module/jdk1.8.0_212

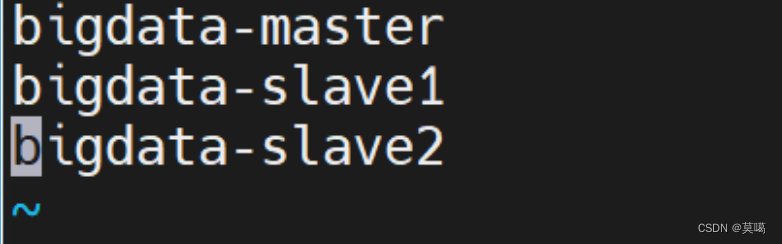

(6).workers

vim workers

List one hostname per line:

bigdata-master
bigdata-slave1
bigdata-slave2
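The workers file is read line by line, so each hostname needs its own line. A minimal sketch that writes it that way follows. `CONF_DIR` is an illustrative variable that defaults to a scratch directory instead of the real etc/hadoop directory.

```shell
#!/usr/bin/env bash
# Sketch: write the workers file with one hostname per line.
# CONF_DIR defaults to a scratch directory (an assumption for safe testing).
CONF_DIR="${CONF_DIR:-$(mktemp -d)}"

# printf repeats the '%s\n' format for every argument, giving one line per host.
printf '%s\n' bigdata-master bigdata-slave1 bigdata-slave2 > "$CONF_DIR/workers"

cat "$CONF_DIR/workers"
```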

(7). start-dfs.sh and stop-dfs.sh

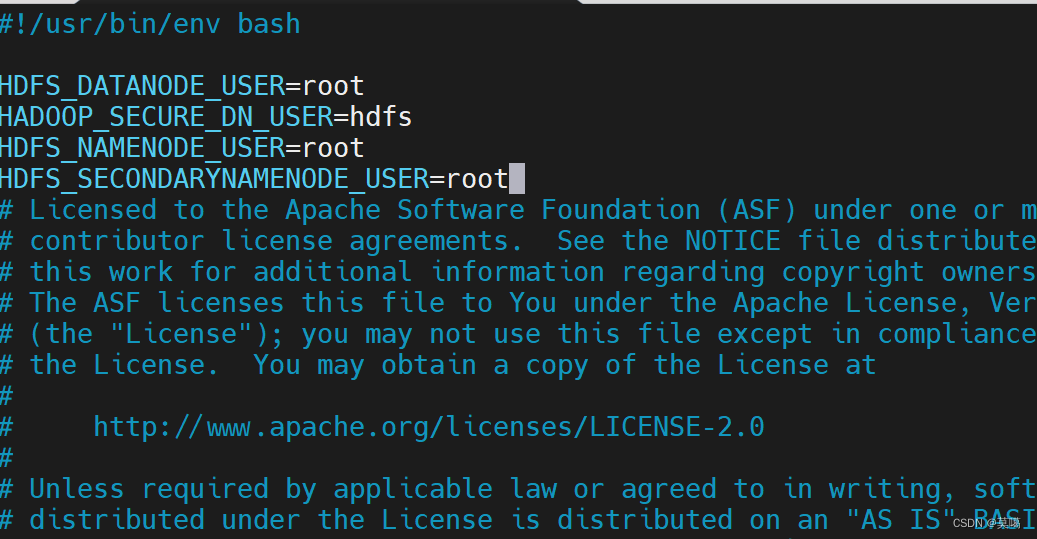

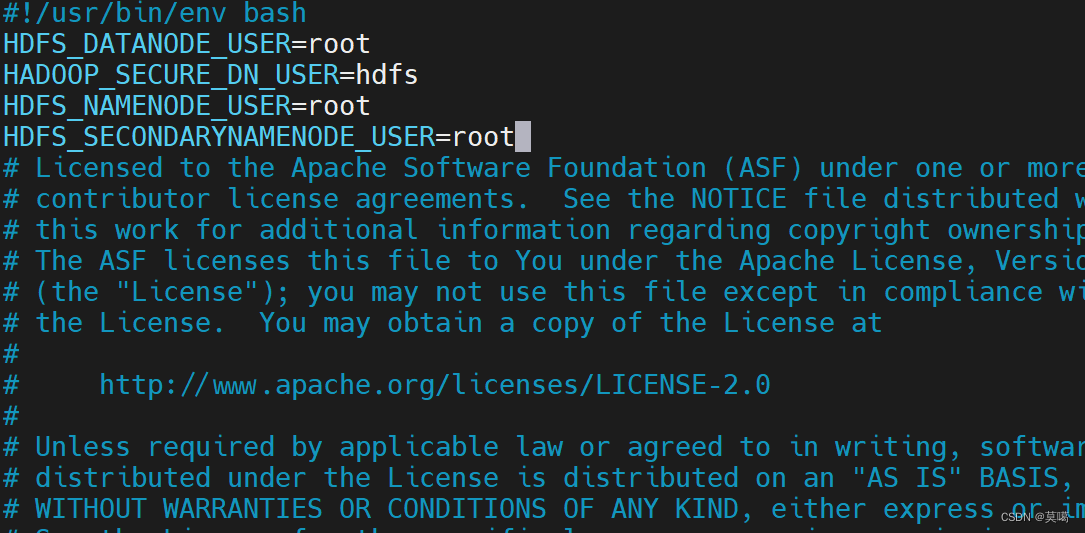

Edit /opt/module/hadoop-3.1.3/sbin/start-dfs.sh and /opt/module/hadoop-3.1.3/sbin/stop-dfs.sh, adding the following variables near the top of each script so that the HDFS daemons can be started as root.

vim /opt/module/hadoop-3.1.3/sbin/start-dfs.sh

HDFS_DATANODE_USER=root
HADOOP_SECURE_DN_USER=hdfs
HDFS_NAMENODE_USER=root
HDFS_SECONDARYNAMENODE_USER=root

vim /opt/module/hadoop-3.1.3/sbin/stop-dfs.sh

HDFS_DATANODE_USER=root
HADOOP_SECURE_DN_USER=hdfs
HDFS_NAMENODE_USER=root
HDFS_SECONDARYNAMENODE_USER=root
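The same four variables go into both scripts, so the edit can be scripted instead of typed in vim twice. The sketch below inserts them directly after the first line (the shebang) and skips the edit if they are already present. `SCRIPT` is an illustrative variable that defaults to a scratch stand-in file; on the cluster it would be start-dfs.sh or stop-dfs.sh under /opt/module/hadoop-3.1.3/sbin.

```shell
#!/usr/bin/env bash
# Sketch: insert the user variables right after the shebang line of a script.
# SCRIPT defaults to a scratch stand-in (an assumption for safe testing).
SCRIPT="${SCRIPT:-$(mktemp)}"
[ -s "$SCRIPT" ] || printf '#!/usr/bin/env bash\necho "rest of script"\n' > "$SCRIPT"

VARS='HDFS_DATANODE_USER=root
HADOOP_SECURE_DN_USER=hdfs
HDFS_NAMENODE_USER=root
HDFS_SECONDARYNAMENODE_USER=root'

# Skip the edit when the variables are already there, so reruns are harmless.
if ! grep -q '^HDFS_NAMENODE_USER=' "$SCRIPT"; then
  # Pass the block through the environment; awk prints it after line 1.
  VARS="$VARS" awk 'NR == 1 { print; print ENVIRON["VARS"]; next } { print }' \
    "$SCRIPT" > "$SCRIPT.new"
  # Overwrite in place (rather than mv) so the script keeps its permissions.
  cat "$SCRIPT.new" > "$SCRIPT" && rm -f "$SCRIPT.new"
fi

head -n 5 "$SCRIPT"
```

The same approach applies to start-yarn.sh and stop-yarn.sh by swapping in the YARN variables and the matching `grep` pattern.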

(8). start-yarn.sh and stop-yarn.sh

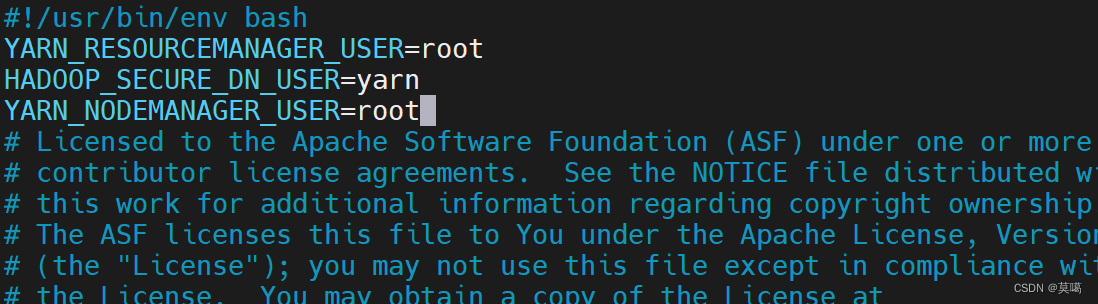

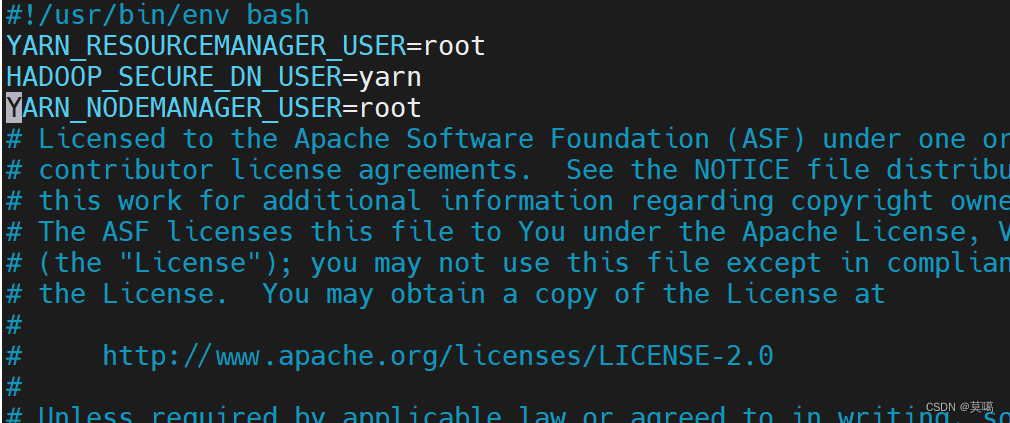

Edit /opt/module/hadoop-3.1.3/sbin/start-yarn.sh and /opt/module/hadoop-3.1.3/sbin/stop-yarn.sh, adding the following variables near the top of each script so that the YARN daemons can be started as root.

vim /opt/module/hadoop-3.1.3/sbin/start-yarn.sh

YARN_RESOURCEMANAGER_USER=root
HADOOP_SECURE_DN_USER=yarn
YARN_NODEMANAGER_USER=root

vim /opt/module/hadoop-3.1.3/sbin/stop-yarn.sh

YARN_RESOURCEMANAGER_USER=root
HADOOP_SECURE_DN_USER=yarn
YARN_NODEMANAGER_USER=root

3. Environment variables

vim /etc/profile

#HADOOP_HOME
export HADOOP_HOME=/opt/module/hadoop-3.1.3
export HADOOP_MAPRED_HOME=$HADOOP_HOME
export HADOOP_COMMON_HOME=$HADOOP_HOME
export HADOOP_HDFS_HOME=$HADOOP_HOME
export YARN_HOME=$HADOOP_HOME
export PATH=$PATH:$HADOOP_HOME/bin
export PATH=$PATH:$HADOOP_HOME/sbin

Apply the variables:

source /etc/profile

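4. Distribute (or repeat the steps above by hand on the other two machines)

Distribute Hadoop and the JDK. Copying into /opt/ places the directory at /opt/module on the target whether or not that directory already exists there.

[root@bigdata-master hadoop]# scp -r /opt/module root@bigdata-slave1:/opt/

[root@bigdata-master hadoop]# scp -r /opt/module root@bigdata-slave2:/opt/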
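With more than a couple of workers, the copy is easier to keep consistent as a loop. The following sketch assumes passwordless SSH from bigdata-master is already working. It copies to /opt/ rather than /opt/module so the result is /opt/module on the target even when that directory already exists. The `run` helper and `DRY_RUN` switch are illustrative names; with the default `DRY_RUN=1` the commands are only printed.

```shell
#!/usr/bin/env bash
# Sketch: copy /opt/module (JDK and Hadoop) to each worker node.
# Assumes passwordless SSH from this node to the workers is already set up.
WORKERS="bigdata-slave1 bigdata-slave2"
DRY_RUN="${DRY_RUN:-1}"   # 1 = print commands only, 0 = execute them

run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

for host in $WORKERS; do
  # Target /opt/ so the directory arrives as /opt/module, not /opt/module/module.
  run scp -r /opt/module "root@${host}:/opt/"
done
```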

Configure the same environment variables on the other two machines, then apply them:

source /etc/profile

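5. Format HDFS (on bigdata-master)

Do not format more than once. Reformatting gives the NameNode a new cluster ID that no longer matches the one stored by the existing DataNodes, which then fail to start.

hdfs namenode -format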

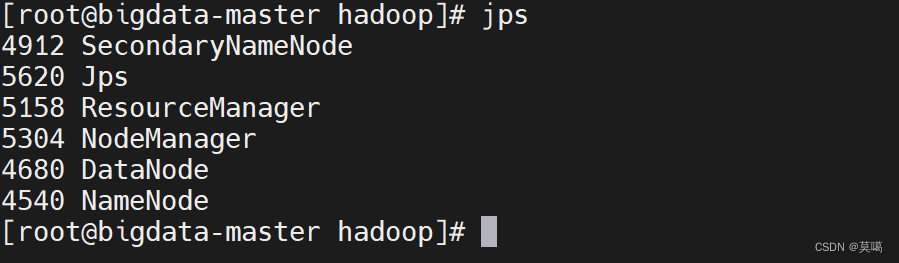

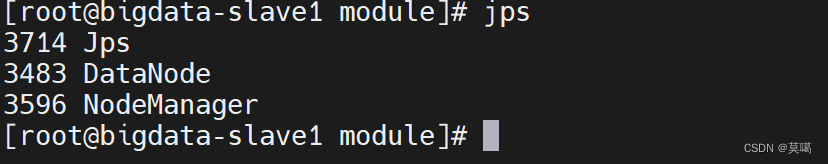
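One way to avoid formatting twice by accident is to check for the `current/VERSION` file that a formatted NameNode directory contains. The guard below is a sketch, not part of the original steps. `NAME_DIR` is assumed to match `dfs.namenode.name.dir` from hdfs-site.xml (without the `file:` prefix), and `already_formatted` is an illustrative helper name.

```shell
#!/usr/bin/env bash
# Sketch: format the NameNode only if it has not been formatted yet.
# NAME_DIR is assumed to match dfs.namenode.name.dir (without the file: prefix).
NAME_DIR="${NAME_DIR:-/opt/module/hadoop-3.1.3/tmp/dfs/name}"

# A formatted NameNode directory contains current/VERSION.
already_formatted() {
  [ -f "$1/current/VERSION" ]
}

if already_formatted "$NAME_DIR"; then
  echo "NameNode already formatted at $NAME_DIR; skipping."
elif command -v hdfs >/dev/null 2>&1; then
  hdfs namenode -format
else
  echo "hdfs not found on PATH; run 'source /etc/profile' first."
fi
```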

6. Start Hadoop

start-all.sh

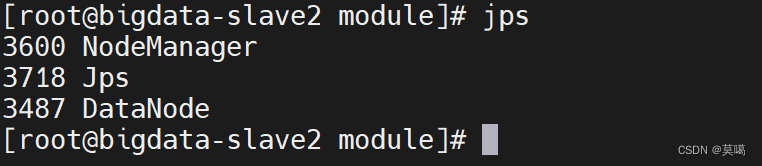

Check the running processes with jps: