YOLOv10改进 | 损失函数篇 | SlideLoss、FocalLoss、VFLoss分类损失函数助力细节涨点(全网最全)

一、本文介绍

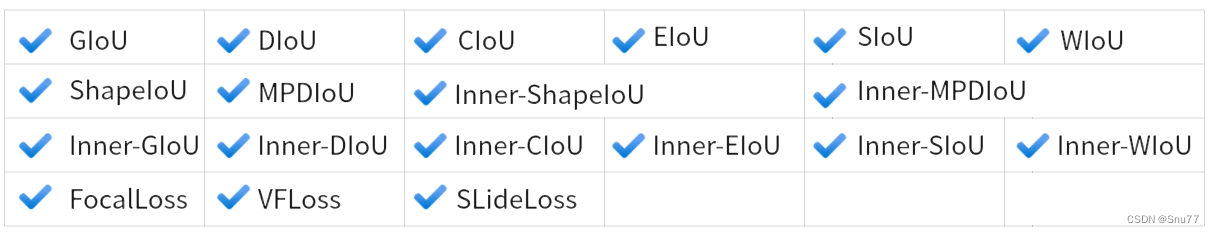

本文给大家带来的是分类损失 SlideLoss、VFLoss、FocalLoss损失函数,我们之前看那的那些IoU都是边界框回归损失,和本文的修改内容并不冲突,所以大家可以知道损失函数分为两种一种是分类损失另一种是边界框回归损失,上一篇文章里面我们总结了过去百分之九十的边界框回归损失的使用方法,本文我们就来介绍几种市面上流行的和最新的分类损失函数,同时在开始讲解之前推荐一下我的专栏,本专栏的内容支持(分类、检测、分割、追踪、关键点检测),专栏目前为限时折扣,欢迎大家订阅本专栏,本专栏每周更新3-5篇最新机制,更有包含我所有改进的文件和交流群提供给大家,本文支持的损失函数共有如下图片所示

欢迎大家订阅我的专栏一起学习YOLO!

专栏回顾:YOLOv10改进系列专栏——本专栏持续复习各种顶会内容——科研必备

目录

一、本文介绍

二、原理介绍

三、核心代码

三、使用方式

3.1 修改一

3.2 修改二

3.3 使用方法

四 、本文总

二、原理介绍

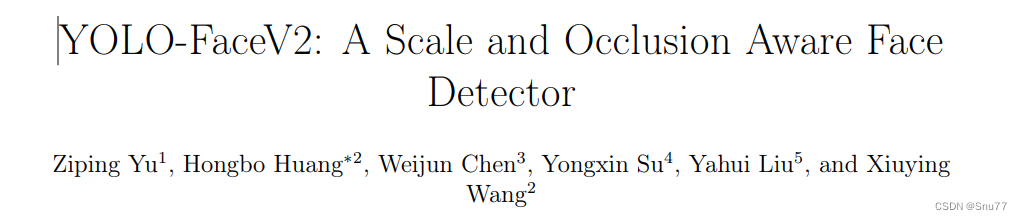

其中绝大多数损失在前面我们都讲过了本文主要讲一下SlidLoss的原理,SlideLoss的损失首先是由YOLO-FaceV2提出来的。

官方论文地址: 官方论文地址点击即可跳转

官方代码地址: 官方代码地址点击即可跳转

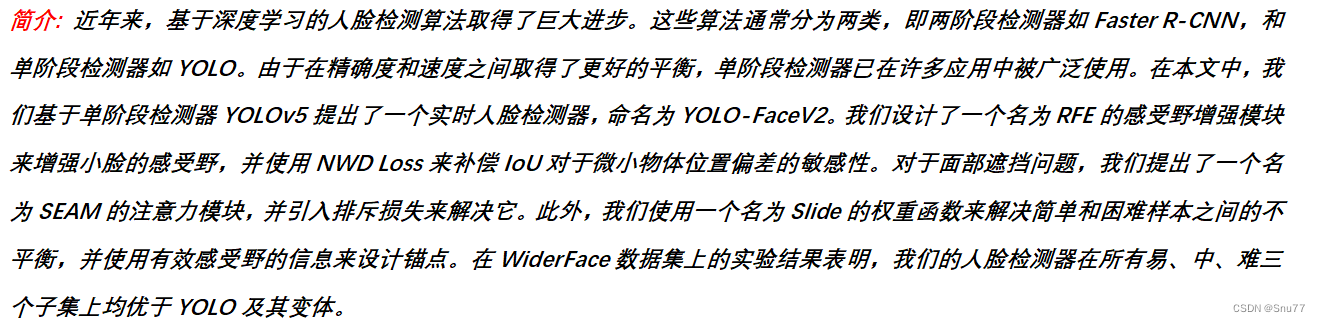

从摘要上我们可以看出SLideLoss的出现是通过权重函数来解决简单和困难样本之间的不平衡问题题,什么是简单样本和困难样本?

样本不平衡问题是一个常见的问题,尤其是在分类和目标检测任务中。它通常指的是训练数据集中不同类别的样本数量差异很大。对于人脸检测这样的任务来说,简单样本和困难样本之间的不平衡问题可以具体描述如下:

简单样本:

- 容易被模型正确识别的样本。

- 通常出现在数据集中的数量较多。

- 特征明显,分类或检测边界清晰。

- 在训练中,这些样本会给出较低的损失值,因为模型可以轻易地正确预测它们。

困难样本:

- 模型难以正确识别的样本。

- 在数据集中相对较少,但对模型性能的提升至关重要。

- 可能由于多种原因变得难以识别,如遮挡、变形、模糊、光照变化、小尺寸或者与背景的低对比度。

- 在训练中,这些样本会产生较高的损失值,因为模型很难对它们给出准确的预测。

解决样本不平衡的问题是提高模型泛化能力的关键。如果模型大部分只见过简单样本,它可能在实际应用中遇到困难样本时性能下降。因此采用各种策略来解决这个问题,例如重采样(对困难样本进行过采样或对简单样本进行欠采样)、修改损失函数(给困难样本更高的权重),或者是设计新的模型结构来专门关注困难样本。在YOLO-FaceV2中,作者通过Slide Loss这样的权重函数来让模型在训练过程中更关注那些困难样本(这也是本文的修改内容)。

三、核心代码

使用方式看章节四

import math class SlideLoss(nn.Module): def __init__(self, loss_fcn): super(SlideLoss, self).__init__() self.loss_fcn = loss_fcn self.reduction = loss_fcn.reduction self.loss_fcn.reduction = 'none' # required to apply SL to each element def forward(self, pred, true, auto_iou=0.5): loss = self.loss_fcn(pred, true) if auto_iou = auto_iou a3 = torch.exp(-(true - 1.0)) modulating_weight = a1 * b1 + a2 * b2 + a3 * b3 loss *= modulating_weight if self.reduction == 'mean': return loss.mean() elif self.reduction == 'sum': return loss.sum() else: # 'none' return loss class Focal_Loss(nn.Module): # Wraps focal loss around existing loss_fcn(), i.e. criteria = FocalLoss(nn.BCEWithLogitsLoss(), gamma=1.5) def __init__(self, loss_fcn, gamma=1.5, alpha=0.25): super().__init__() self.loss_fcn = loss_fcn # must be nn.BCEWithLogitsLoss() self.gamma = gamma self.alpha = alpha self.reduction = loss_fcn.reduction self.loss_fcn.reduction = 'none' # required to apply FL to each element def forward(self, pred, true): loss = self.loss_fcn(pred, true) # p_t = torch.exp(-loss) # loss *= self.alpha * (1.000001 - p_t) ** self.gamma # non-zero power for gradient stability # TF implementation https://github.com/tensorflow/addons/blob/v0.7.1/tensorflow_addons/losses/focal_loss.py pred_prob = torch.sigmoid(pred) # prob from logits p_t = true * pred_prob + (1 - true) * (1 - pred_prob) alpha_factor = true * self.alpha + (1 - true) * (1 - self.alpha) modulating_factor = (1.0 - p_t) ** self.gamma loss *= alpha_factor * modulating_factor if self.reduction == 'mean': return loss.mean() elif self.reduction == 'sum': return loss.sum() else: # 'none' return loss def reduce_loss(loss, reduction): """Reduce loss as specified. Args: loss (Tensor): Elementwise loss tensor. reduction (str): Options are "none", "mean" and "sum". Return: Tensor: Reduced loss tensor. """ reduction_enum = F._Reduction.get_enum(reduction) # none: 0, elementwise_mean:1, sum: 2 if reduction_enum == 0: return loss elif reduction_enum == 1: return loss.mean() elif reduction_enum == 2: return loss.sum() def weight_reduce_loss(loss, weight=None, reduction='mean', avg_factor=None): """Apply element-wise weight and reduce loss. Args: loss (Tensor): Element-wise loss. weight (Tensor): Element-wise weights. reduction (str): Same as built-in losses of PyTorch. avg_factor (float): Avarage factor when computing the mean of losses. Returns: Tensor: Processed loss values. """ # if weight is specified, apply element-wise weight if weight is not None: loss = loss * weight # if avg_factor is not specified, just reduce the loss if avg_factor is None: loss = reduce_loss(loss, reduction) else: # if reduction is mean, then average the loss by avg_factor if reduction == 'mean': loss = loss.sum() / avg_factor # if reduction is 'none', then do nothing, otherwise raise an error elif reduction != 'none': raise ValueError('avg_factor can not be used with reduction="sum"') return loss def varifocal_loss(pred, target, weight=None, alpha=0.75, gamma=2.0, iou_weighted=True, reduction='mean', avg_factor=None): """`Varifocal Loss `_ Args: pred (torch.Tensor): The prediction with shape (N, C), C is the number of classes target (torch.Tensor): The learning target of the iou-aware classification score with shape (N, C), C is the number of classes. weight (torch.Tensor, optional): The weight of loss for each prediction. Defaults to None. alpha (float, optional): A balance factor for the negative part of Varifocal Loss, which is different from the alpha of Focal Loss. Defaults to 0.75. gamma (float, optional): The gamma for calculating the modulating factor. Defaults to 2.0. iou_weighted (bool, optional): Whether to weight the loss of the positive example with the iou target. Defaults to True. reduction (str, optional): The method used to reduce the loss into a scalar. Defaults to 'mean'. Options are "none", "mean" and "sum". avg_factor (int, optional): Average factor that is used to average the loss. Defaults to None. """ # pred and target should be of the same size assert pred.size() == target.size() pred_sigmoid = pred.sigmoid() target = target.type_as(pred) if iou_weighted: focal_weight = target * (target > 0.0).float() + \ alpha * (pred_sigmoid - target).abs().pow(gamma) * \ (target 0.0).float() + \ alpha * (pred_sigmoid - target).abs().pow(gamma) * \ (target = 0.0 self.use_sigmoid = use_sigmoid self.alpha = alpha self.gamma = gamma self.iou_weighted = iou_weighted self.reduction = reduction self.loss_weight = loss_weight def forward(self, pred, target, weight=None, avg_factor=None, reduction_override=None): """Forward function. Args: pred (torch.Tensor): The prediction. target (torch.Tensor): The learning target of the prediction. weight (torch.Tensor, optional): The weight of loss for each prediction. Defaults to None. avg_factor (int, optional): Average factor that is used to average the loss. Defaults to None. reduction_override (str, optional): The reduction method used to override the original reduction method of the loss. Options are "none", "mean" and "sum". Returns: torch.Tensor: The calculated loss """ assert reduction_override in (None, 'none', 'mean', 'sum') reduction = ( reduction_override if reduction_override else self.reduction) if self.use_sigmoid: loss_cls = self.loss_weight * varifocal_loss( pred, target, weight, alpha=self.alpha, gamma=self.gamma, iou_weighted=self.iou_weighted, reduction=reduction, avg_factor=avg_factor) else: raise NotImplementedError return loss_cls三、使用方式

3.1 修改一

我们找到如下的文件'ultralytics/utils/loss.py'然后将上面的核心代码粘贴到文件的开头位置(注意是其他模块的导入之后!)粘贴后的样子如下图所示!

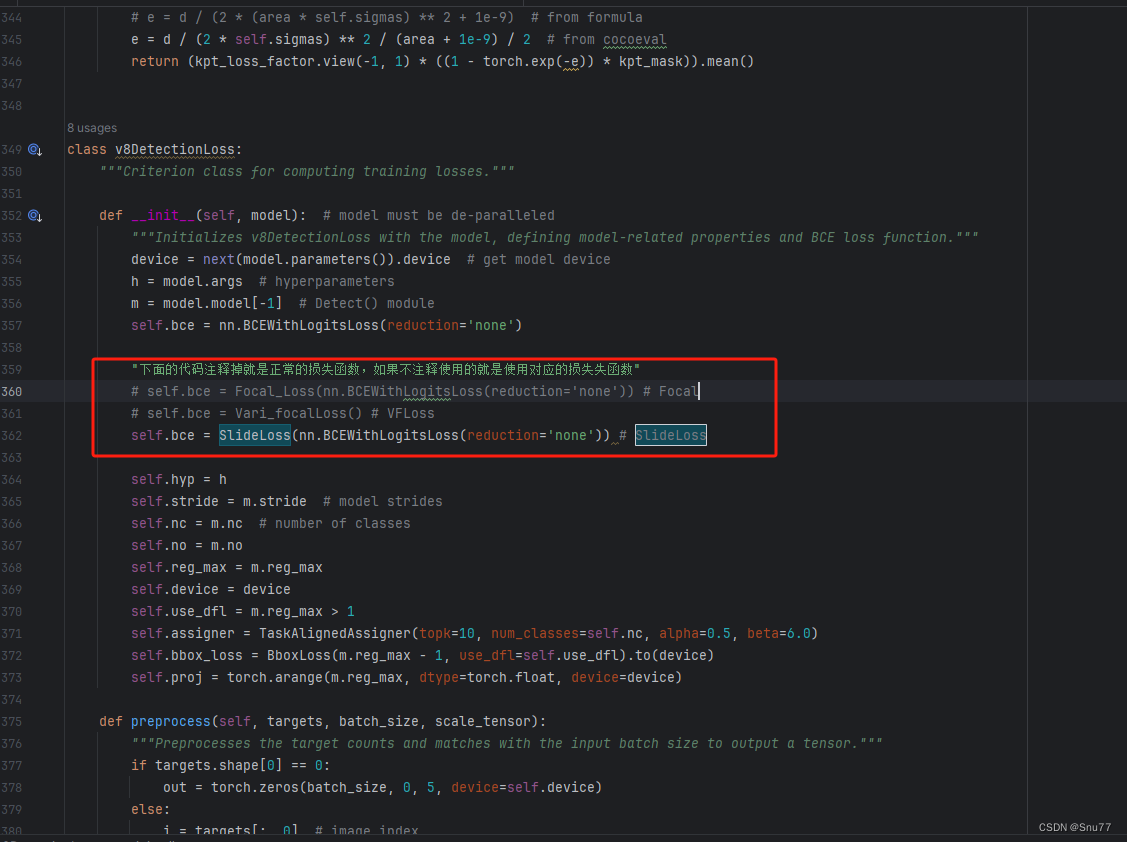

3.2 修改二

第二步我门中到函数class v8DetectionLoss:(没看错V10继承的v8损失函数我们修改v8就相当于修改了v10)!我们下下面的代码全部替换class v8DetectionLoss:的内容!

class v8DetectionLoss: """Criterion class for computing training losses.""" def __init__(self, model): # model must be de-paralleled """Initializes v8DetectionLoss with the model, defining model-related properties and BCE loss function.""" device = next(model.parameters()).device # get model device h = model.args # hyperparameters m = model.model[-1] # Detect() module self.bce = nn.BCEWithLogitsLoss(reduction="none") "下面的代码注释掉就是正常的损失函数,如果不注释使用的就是使用对应的损失失函数" # self.bce = Focal_Loss(nn.BCEWithLogitsLoss(reduction='none')) # Focal # self.bce = Vari_focalLoss() # VFLoss # self.bce = SlideLoss(nn.BCEWithLogitsLoss(reduction='none')) # SlideLoss # self.bce = QualityfocalLoss() # 目前仅支持者目标检测需要注意 分割 Pose 等用不了! self.hyp = h self.stride = m.stride # model strides self.nc = m.nc # number of classes self.no = m.nc + m.reg_max * 4 self.reg_max = m.reg_max self.device = device self.use_dfl = m.reg_max > 1 self.assigner = TaskAlignedAssigner(topk=10, num_classes=self.nc, alpha=0.5, beta=6.0) self.bbox_loss = BboxLoss(m.reg_max - 1, use_dfl=self.use_dfl).to(device) self.proj = torch.arange(m.reg_max, dtype=torch.float, device=device) def preprocess(self, targets, batch_size, scale_tensor): """Preprocesses the target counts and matches with the input batch size to output a tensor.""" if targets.shape[0] == 0: out = torch.zeros(batch_size, 0, 5, device=self.device) else: i = targets[:, 0] # image index _, counts = i.unique(return_counts=True) counts = counts.to(dtype=torch.int32) out = torch.zeros(batch_size, counts.max(), 5, device=self.device) for j in range(batch_size): matches = i == j n = matches.sum() if n: out[j, :n] = targets[matches, 1:] out[..., 1:5] = xywh2xyxy(out[..., 1:5].mul_(scale_tensor)) return out def bbox_decode(self, anchor_points, pred_dist): """Decode predicted object bounding box coordinates from anchor points and distribution.""" if self.use_dfl: b, a, c = pred_dist.shape # batch, anchors, channels pred_dist = pred_dist.view(b, a, 4, c // 4).softmax(3).matmul(self.proj.type(pred_dist.dtype)) # pred_dist = pred_dist.view(b, a, c // 4, 4).transpose(2,3).softmax(3).matmul(self.proj.type(pred_dist.dtype)) # pred_dist = (pred_dist.view(b, a, c // 4, 4).softmax(2) * self.proj.type(pred_dist.dtype).view(1, 1, -1, 1)).sum(2) return dist2bbox(pred_dist, anchor_points, xywh=False) def __call__(self, preds, batch): """Calculate the sum of the loss for box, cls and dfl multiplied by batch size.""" loss = torch.zeros(3, device=self.device) # box, cls, dfl feats = preds[1] if isinstance(preds, tuple) else preds pred_distri, pred_scores = torch.cat([xi.view(feats[0].shape[0], self.no, -1) for xi in feats], 2).split( (self.reg_max * 4, self.nc), 1 ) pred_scores = pred_scores.permute(0, 2, 1).contiguous() pred_distri = pred_distri.permute(0, 2, 1).contiguous() dtype = pred_scores.dtype batch_size = pred_scores.shape[0] imgsz = torch.tensor(feats[0].shape[2:], device=self.device, dtype=dtype) * self.stride[0] # image size (h,w) anchor_points, stride_tensor = make_anchors(feats, self.stride, 0.5) # Targets targets = torch.cat((batch["batch_idx"].view(-1, 1), batch["cls"].view(-1, 1), batch["bboxes"]), 1) targets = self.preprocess(targets.to(self.device), batch_size, scale_tensor=imgsz[[1, 0, 1, 0]]) gt_labels, gt_bboxes = targets.split((1, 4), 2) # cls, xyxy mask_gt = gt_bboxes.sum(2, keepdim=True).gt_(0) # pboxes pred_bboxes = self.bbox_decode(anchor_points, pred_distri) # xyxy, (b, h*w, 4) target_labels, target_bboxes, target_scores, fg_mask, _ = self.assigner( pred_scores.detach().sigmoid(), (pred_bboxes.detach() * stride_tensor).type(gt_bboxes.dtype), anchor_points * stride_tensor, gt_labels, gt_bboxes, mask_gt) target_scores_sum = max(target_scores.sum(), 1) # Cls loss # loss[1] = self.varifocal_loss(pred_scores, target_scores, target_labels) / target_scores_sum # VFL way if isinstance(self.bce, (nn.BCEWithLogitsLoss, Vari_focalLoss, Focal_Loss)): loss[1] = self.bce(pred_scores, target_scores.to(dtype)).sum() / target_scores_sum # BCE VFLoss Focal elif isinstance(self.bce, SlideLoss): if fg_mask.sum(): auto_iou = bbox_iou(pred_bboxes[fg_mask], target_bboxes[fg_mask], xywh=False, CIoU=True).mean() else: auto_iou = 0.1 loss[1] = self.bce(pred_scores, target_scores.to(dtype), auto_iou).sum() / target_scores_sum # SlideLoss elif isinstance(self.bce, QualityfocalLoss): if fg_mask.sum(): pos_ious = bbox_iou(pred_bboxes, target_bboxes / stride_tensor, xywh=False).clamp(min=1e-6).detach() # 10.0x Faster than torch.one_hot targets_onehot = torch.zeros((target_labels.shape[0], target_labels.shape[1], self.nc), dtype=torch.int64, device=target_labels.device) # (b, h*w, 80) targets_onehot.scatter_(2, target_labels.unsqueeze(-1), 1) cls_iou_targets = pos_ious * targets_onehot fg_scores_mask = fg_mask[:, :, None].repeat(1, 1, self.nc) # (b, h*w, 80) targets_onehot_pos = torch.where(fg_scores_mask > 0, targets_onehot, 0) cls_iou_targets = torch.where(fg_scores_mask > 0, cls_iou_targets, 0) else: cls_iou_targets = torch.zeros((target_labels.shape[0], target_labels.shape[1], self.nc), dtype=torch.int64, device=target_labels.device) # (b, h*w, 80) targets_onehot_pos = torch.zeros((target_labels.shape[0], target_labels.shape[1], self.nc), dtype=torch.int64, device=target_labels.device) # (b, h*w, 80) loss[1] = self.bce(pred_scores, cls_iou_targets.to(dtype), targets_onehot_pos.to(torch.bool)).sum() / max( fg_mask.sum(), 1) else: loss[1] = self.bce(pred_scores, target_scores.to(dtype)).sum() / target_scores_sum # 确保有损失可用 # Bbox loss if fg_mask.sum(): target_bboxes /= stride_tensor loss[0], loss[2] = self.bbox_loss(pred_distri, pred_bboxes, anchor_points, target_bboxes, target_scores, target_scores_sum, fg_mask, ((imgsz[0] ** 2 + imgsz[1] ** 2) / torch.square(stride_tensor)).repeat(1, batch_size).transpose( 1, 0)) loss[0] *= self.hyp.box # box gain loss[1] *= self.hyp.cls # cls gain loss[2] *= self.hyp.dfl # dfl gain return loss.sum() * batch_size, loss.detach() # loss(box, cls, dfl)3.3 使用方法

将上面的代码复制粘贴之后,我门找到下图所在的位置,使用方法就是那个取消注释就是使用的就是那个!

四 、本文总结

到此本文的正式分享内容就结束了,在这里给大家推荐我的YOLOv10改进有效涨点专栏,本专栏目前为新开的平均质量分98分,后期我会根据各种最新的前沿顶会进行论文复现,也会对一些老的改进机制进行补充,如果大家觉得本文帮助到你了,订阅本专栏,关注后续更多的更新~

专栏回顾:YOLOv10改进系列专栏——本专栏持续复习各种顶会内容——科研必备