Transformers x SwanLab:可视化NLP模型训练

HuggingFace 的 Transformers 是目前最流行的深度学习训框架之一(100k+ Star),现在主流的大语言模型(LLaMa系列、Qwen系列、ChatGLM系列等)、自然语言处理模型(Bert系列)等,都在使用Transformers来进行预训练、微调和推理。

SwanLab是一个深度学习实验管理与训练可视化工具,由西安电子科技大学团队打造,融合了Weights & Biases与Tensorboard的特点,能够方便地进行 训练可视化、多实验对比、超参数记录、大型实验管理和团队协作,并支持用网页链接的方式分享你的实验。

你可以使用Transformers快速进行模型训练,同时使用SwanLab进行实验跟踪与可视化。

下面将用一个Bert训练,来介绍如何将Transformers与SwanLab配合起来:

1. 代码中引入SwanLabCallback

from swanlab.integration.huggingface import SwanLabCallback

SwanLabCallback是适配于Transformers的日志记录类。

SwanLabCallback可以定义的参数有:

- project、experiment_name、description 等与 swanlab.init 效果一致的参数, 用于SwanLab项目的初始化。

- 你也可以在外部通过swanlab.init创建项目,集成会将实验记录到你在外部创建的项目中。

2. 传入Trainer

from swanlab.integration.huggingface import SwanLabCallback from transformers import Trainer, TrainingArguments ... # 实例化SwanLabCallback swanlab_callback = SwanLabCallback() trainer = Trainer( ... # 传入callbacks参数 callbacks=[swanlab_callback], )3. 案例-Bert训练

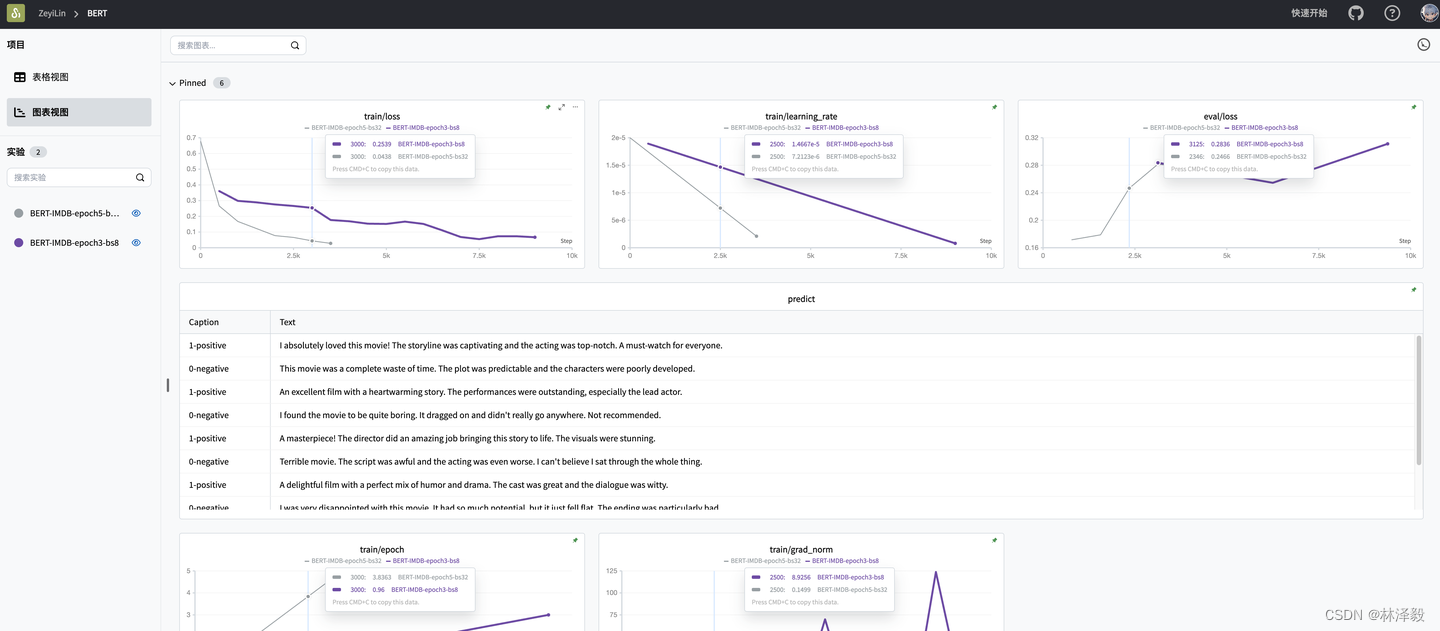

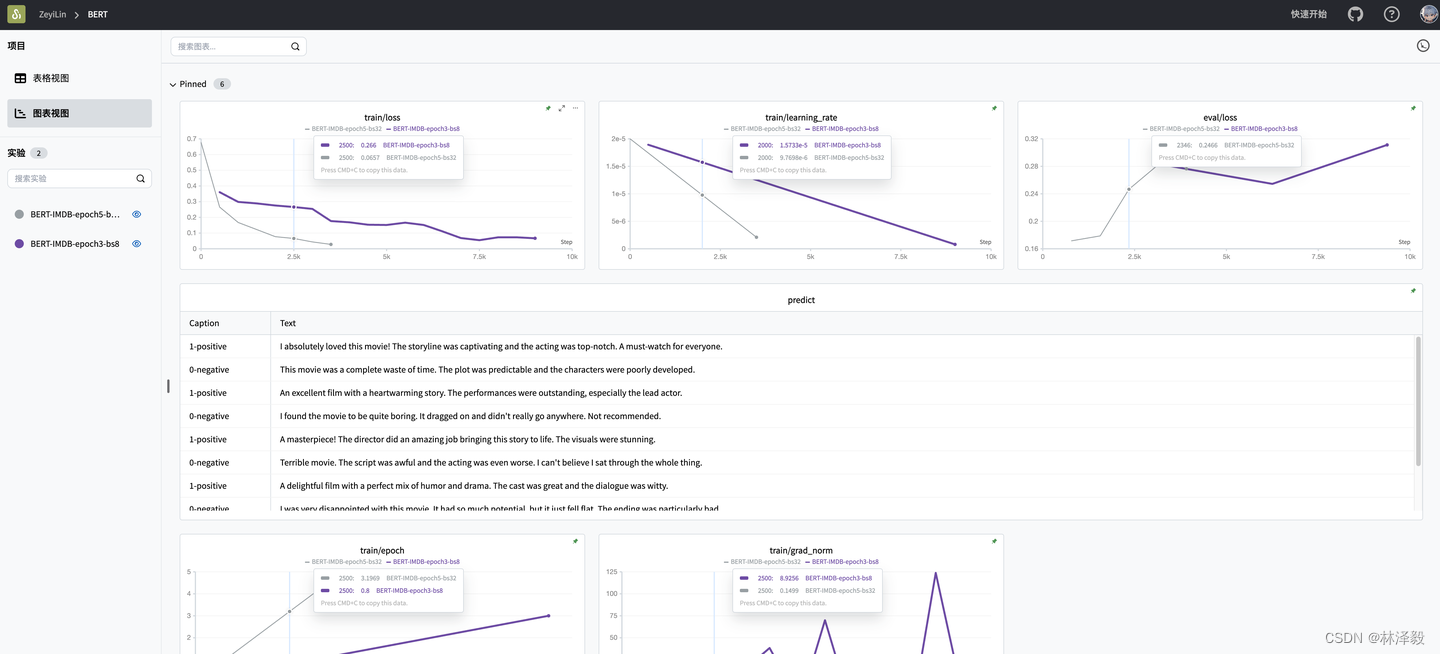

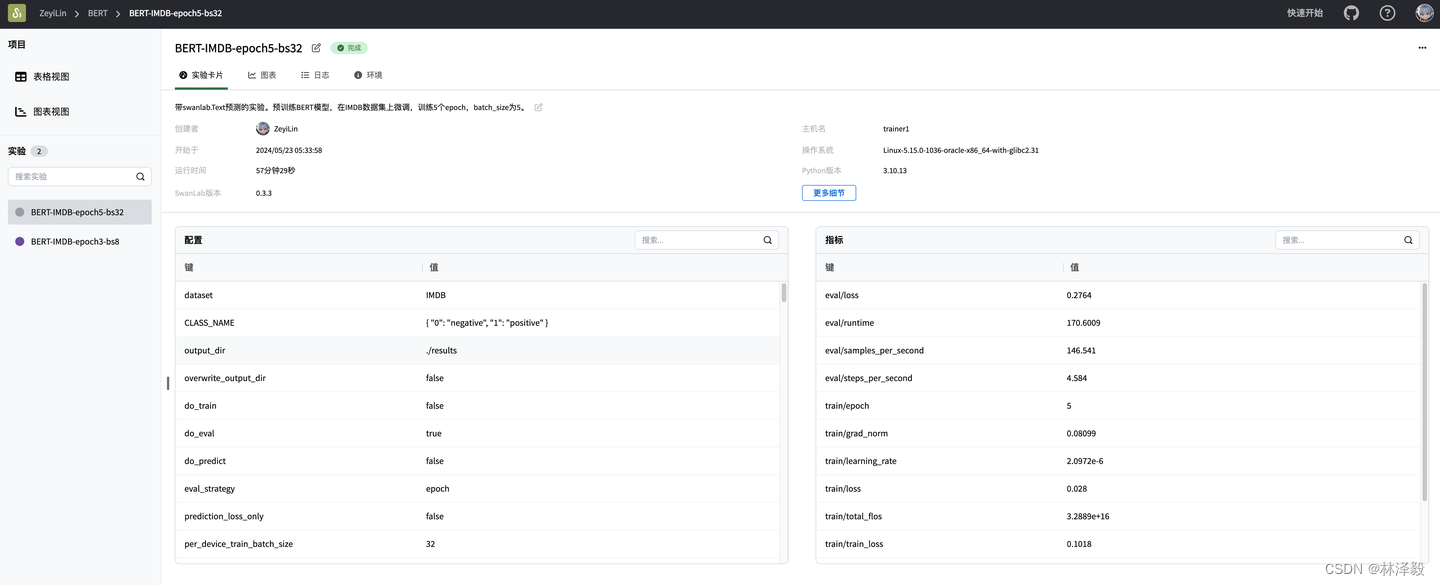

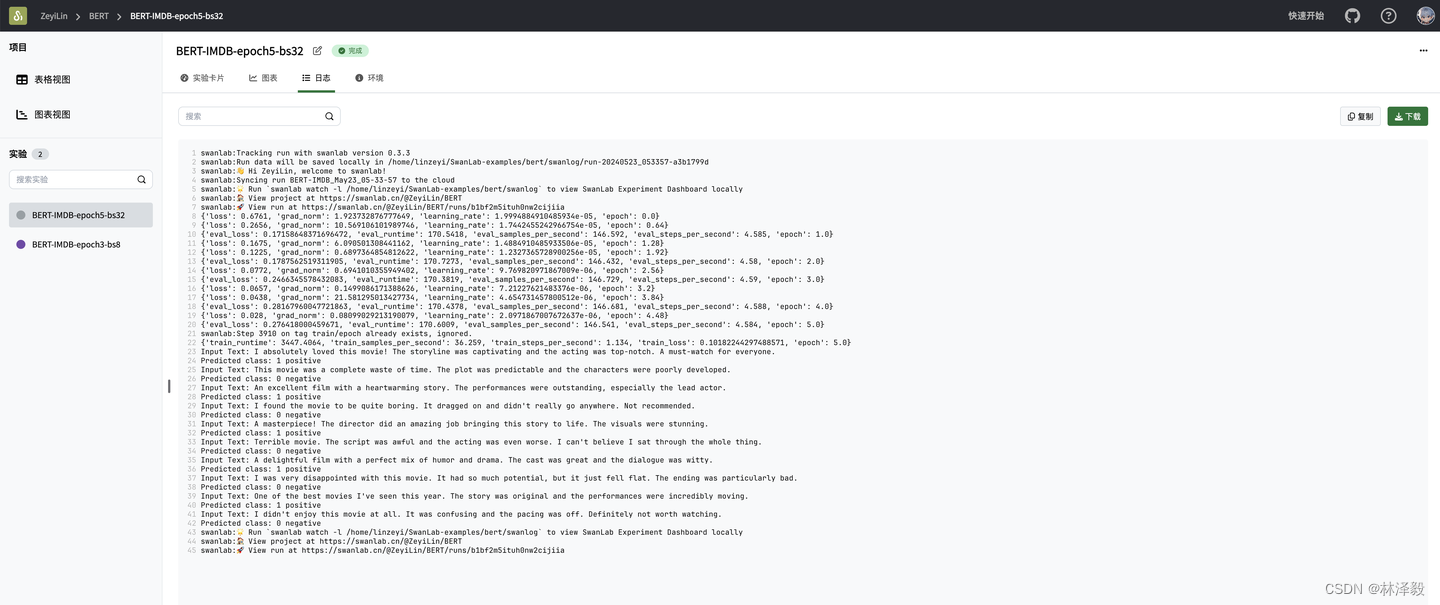

查看在线实验过程:BERT-SwanLab

下面是一个基于Transformers框架,使用BERT模型在imdb数据集上做微调,同时用SwanLab进行可视化的案例代码

""" 用预训练的Bert模型微调IMDB数据集,并使用SwanLabCallback回调函数将结果上传到SwanLab。 IMDB数据集的1是positive,0是negative。 """ import torch from datasets import load_dataset from transformers import AutoTokenizer, AutoModelForSequenceClassification, Trainer, TrainingArguments from swanlab.integration.huggingface import SwanLabCallback import swanlab def predict(text, model, tokenizer, CLASS_NAME): inputs = tokenizer(text, return_tensors="pt") with torch.no_grad(): outputs = model(**inputs) logits = outputs.logits predicted_class = torch.argmax(logits).item() print(f"Input Text: {text}") print(f"Predicted class: {int(predicted_class)} {CLASS_NAME[int(predicted_class)]}") return int(predicted_class) # 加载IMDB数据集 dataset = load_dataset('imdb') # 加载预训练的BERT tokenizer tokenizer = AutoTokenizer.from_pretrained('bert-base-uncased') # 定义tokenize函数 def tokenize(batch): return tokenizer(batch['text'], padding=True, truncation=True) # 对数据集进行tokenization tokenized_datasets = dataset.map(tokenize, batched=True) # 设置模型输入格式 tokenized_datasets = tokenized_datasets.rename_column("label", "labels") tokenized_datasets.set_format('torch', columns=['input_ids', 'attention_mask', 'labels']) # 加载预训练的BERT模型 model = AutoModelForSequenceClassification.from_pretrained('bert-base-uncased', num_labels=2) # 设置训练参数 training_args = TrainingArguments( output_dir='./results', eval_strategy='epoch', save_strategy='epoch', learning_rate=2e-5, per_device_train_batch_size=8, per_device_eval_batch_size=8, logging_first_step=100, # 总的训练轮数 num_train_epochs=3, weight_decay=0.01, report_to="none", # 单卡训练 ) CLASS_NAME = {0: "negative", 1: "positive"} # 设置swanlab回调函数 swanlab_callback = SwanLabCallback(project='BERT', experiment_name='BERT-IMDB', config={'dataset': 'IMDB', "CLASS_NAME": CLASS_NAME}) # 定义Trainer trainer = Trainer( model=model, args=training_args, train_dataset=tokenized_datasets['train'], eval_dataset=tokenized_datasets['test'], callbacks=[swanlab_callback], ) # 训练模型 trainer.train() # 保存模型 model.save_pretrained('./sentiment_model') tokenizer.save_pretrained('./sentiment_model') # 测试模型 test_reviews = [ "I absolutely loved this movie! The storyline was captivating and the acting was top-notch. A must-watch for everyone.", "This movie was a complete waste of time. The plot was predictable and the characters were poorly developed.", "An excellent film with a heartwarming story. The performances were outstanding, especially the lead actor.", "I found the movie to be quite boring. It dragged on and didn't really go anywhere. Not recommended.", "A masterpiece! The director did an amazing job bringing this story to life. The visuals were stunning.", "Terrible movie. The script was awful and the acting was even worse. I can't believe I sat through the whole thing.", "A delightful film with a perfect mix of humor and drama. The cast was great and the dialogue was witty.", "I was very disappointed with this movie. It had so much potential, but it just fell flat. The ending was particularly bad.", "One of the best movies I've seen this year. The story was original and the performances were incredibly moving.", "I didn't enjoy this movie at all. It was confusing and the pacing was off. Definitely not worth watching." ] model.to('cpu') text_list = [] for review in test_reviews: label = predict(review, model, tokenizer, CLASS_NAME) text_list.append(swanlab.Text(review, caption=f"{label}-{CLASS_NAME[label]}")) if text_list: swanlab.log({"predict": text_list}) swanlab.finish()4. 相关链接

- Transformers文档:🤗 Transformers

- SwanLab官网:SwanLab - 在线AI实验平台,一站式跟踪、比较、分享你的模型

- SwanLab官方文档:SwanLab官方文档 | 先进的AI团队协作与模型创新引擎

免责声明:我们致力于保护作者版权,注重分享,被刊用文章因无法核实真实出处,未能及时与作者取得联系,或有版权异议的,请联系管理员,我们会立即处理! 部分文章是来自自研大数据AI进行生成,内容摘自(百度百科,百度知道,头条百科,中国民法典,刑法,牛津词典,新华词典,汉语词典,国家院校,科普平台)等数据,内容仅供学习参考,不准确地方联系删除处理! 图片声明:本站部分配图来自人工智能系统AI生成,觅知网授权图片,PxHere摄影无版权图库和百度,360,搜狗等多加搜索引擎自动关键词搜索配图,如有侵权的图片,请第一时间联系我们,邮箱:ciyunidc@ciyunshuju.com。本站只作为美观性配图使用,无任何非法侵犯第三方意图,一切解释权归图片著作权方,本站不承担任何责任。如有恶意碰瓷者,必当奉陪到底严惩不贷!