Notes on Andrew Ng's AIGC Course "How Diffusion Models Work"

1. Introduction

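Products such as Midjourney, Stable Diffusion, and DALL-E can generate images from a text prompt alone. This course explains how the algorithms behind these applications work.

Course link: https://learn.deeplearning.ai/diffusion-models/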

2. Intuition

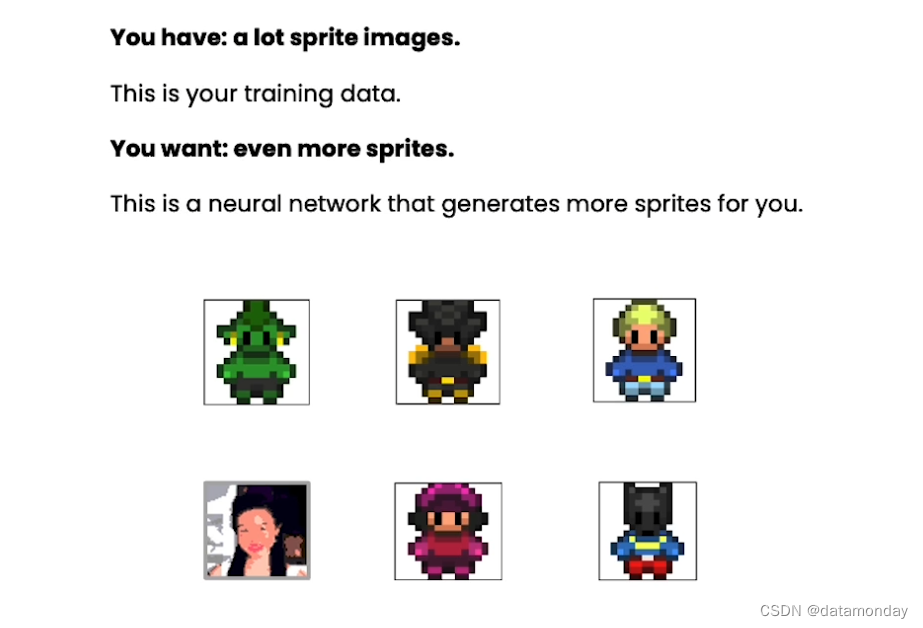

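This section covers the basics of diffusion models: what a diffusion model is meant to achieve, and how training data made up of various game-character images is used to strengthen the model.

Suppose the images below are your dataset and you want more character images that are not in it. How can you get them? A diffusion model can generate such images.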

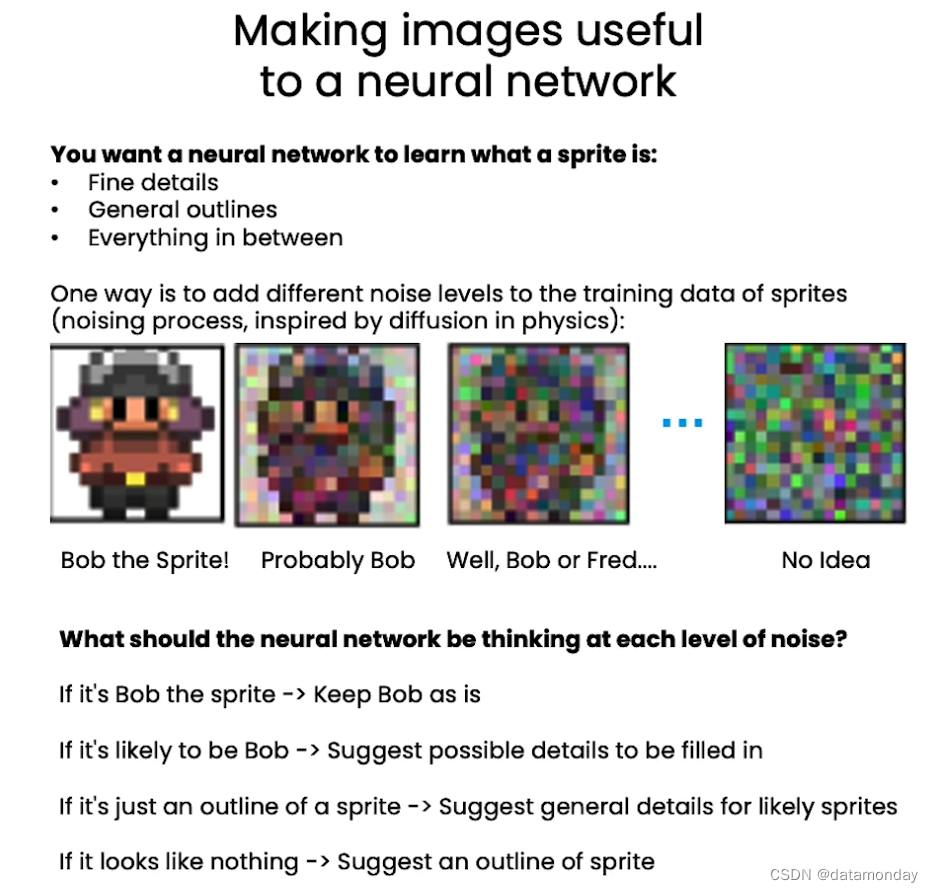

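A diffusion model should be a neural network that learns the general concept of a game character: what a character is, what its body outline looks like, and even finer details such as hair color or a belt buckle.

One way to achieve this is to add different levels of noise to the training data. This is called the noising process, a name inspired by physics. Imagine a drop of ink falling into a glass of water: over time it diffuses through the water until it disappears. Likewise, as you gradually add more noise to an image, its outline goes from sharp to blurry until it is completely unrecognizable. Adding noise level by level is much like ink gradually fading into water.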

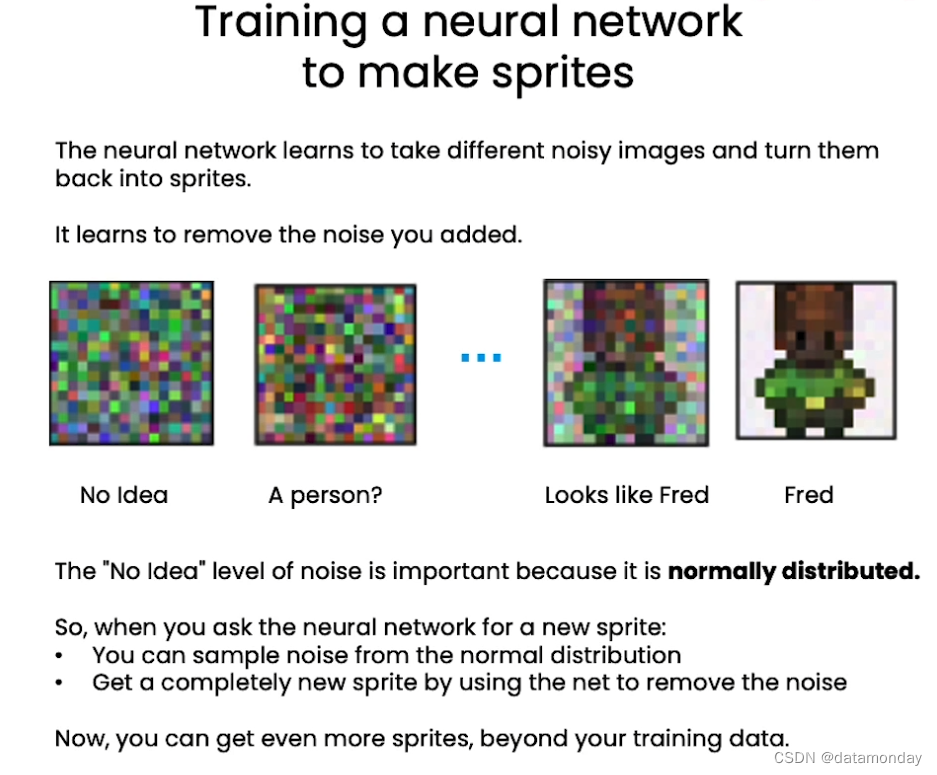
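The noising process described above can be sketched in a few lines. This is a minimal illustration, not the course's code: `add_noise`, `signal_fraction`, and the 4-pixel "image" are hypothetical names and data, and the square-root weighting is the standard DDPM way of mixing signal and noise, which this section does not spell out. It uses plain Python rather than torch so that it runs without dependencies.

```python
import math
import random


def add_noise(pixels, signal_fraction, rng):
    """Mix a clean image with Gaussian noise.

    signal_fraction = 1.0 leaves the image untouched; values close to 0.0
    leave almost pure noise (the fully diffused ink).
    """
    keep = math.sqrt(signal_fraction)          # weight of the original image
    spread = math.sqrt(1.0 - signal_fraction)  # weight of the random noise
    return [keep * p + spread * rng.gauss(0.0, 1.0) for p in pixels]


rng = random.Random(0)
clean = [1.0, -1.0, 0.5, -0.5]  # hypothetical 4-pixel "image"

# From no noise to almost pure noise.
for fraction in (1.0, 0.8, 0.3, 0.01):
    noisy = add_noise(clean, fraction, rng)
    print(fraction, [round(v, 2) for v in noisy])
```

As `signal_fraction` shrinks, the printed values drift away from the clean pixels until nothing of the original pattern is recognizable.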

The training goal is for the model to remove the noise you added, turning images with different levels of noise back into game characters.

3. Sampling

Sampling is what you do at inference time, after the neural network has been trained.

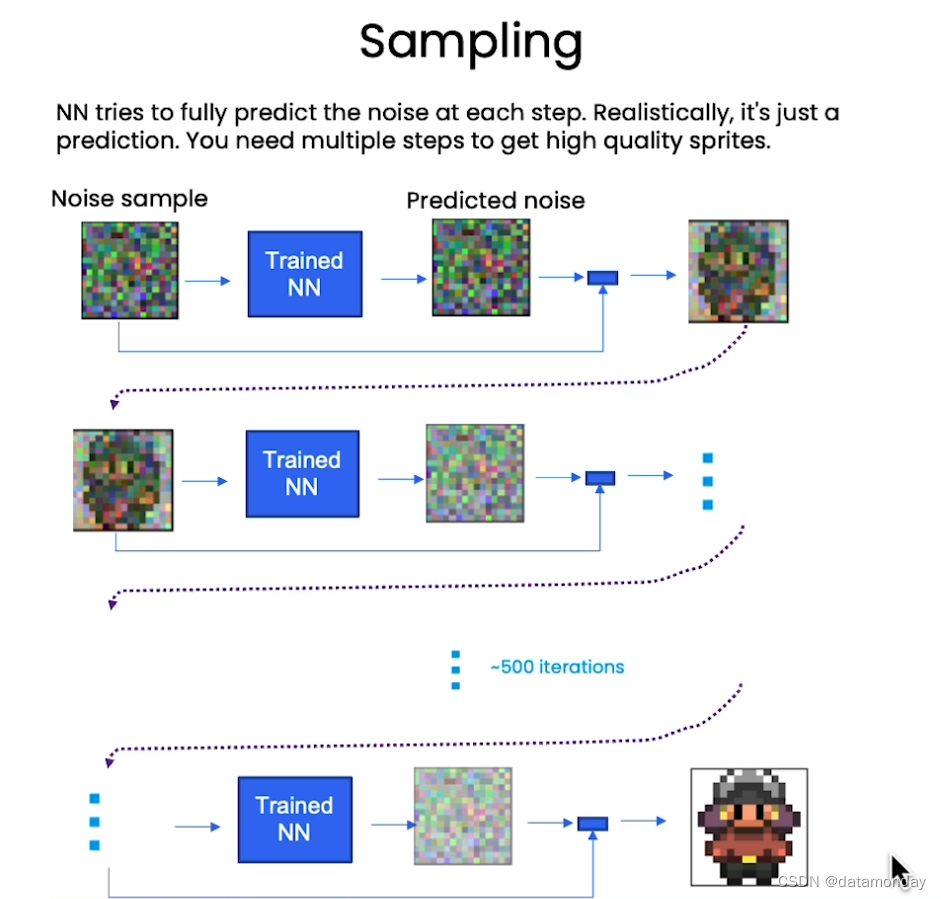

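Suppose you have a sample with noise already added. Feed it into the trained neural network, which has learned what game-character images look like, and have the network predict the noise. Then subtract the predicted noise from the noisy sample; the result is closer to a game-character image.

In practice this is only a prediction of the noise, and it does not remove all of it. Many iterations are needed before you get a high-quality sample that is close to the original images.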

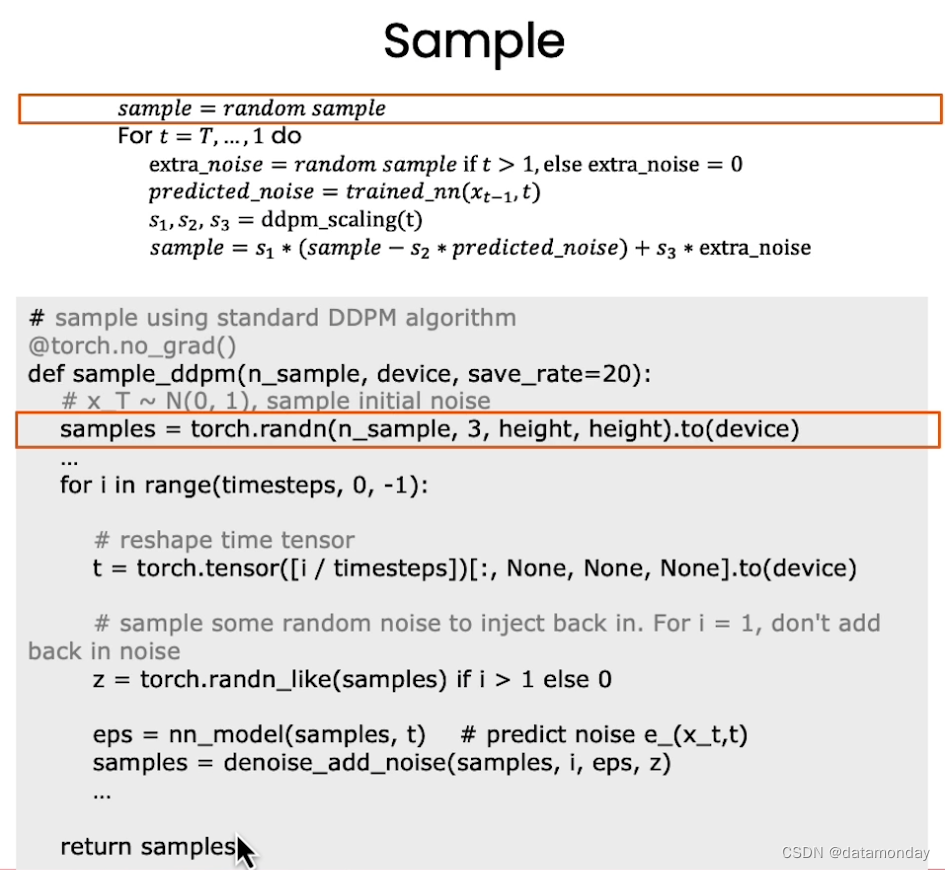

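The sampling algorithm is implemented as follows:

First, draw a random noise sample; this is the pure noise you start from. Then step backward from the last iteration, the fully noised state, to the first iteration. It is like going back in time: in the ink example, you start with the ink fully diffused and rewind until it has just been dropped into the water.

Next, sample some extra noise (`z` in the code).

Then feed the current noisy sample into the neural network to get the predicted noise. This is the noise that the trained network wants to subtract from the noisy sample so that the result looks more like an original game-character image.

Finally, the Denoising Diffusion Probabilistic Models (DDPM) update subtracts the predicted noise from the noisy sample and adds back some of the extra noise.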
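Written as a formula, the last step reads as follows. This is transcribed from the helper `denoise_add_noise` in this section, using $a_t$, $\bar{a}_t$ and $b_t$ for the code's `a_t`, `ab_t` and `b_t`; the symbol $\epsilon_\theta$ for the network's predicted noise is notation introduced here for illustration.

$$
x_{t-1} = \frac{1}{\sqrt{a_t}} \left( x_t - \frac{1 - a_t}{\sqrt{1 - \bar{a}_t}} \, \epsilon_\theta(x_t, t) \right) + \sqrt{b_t} \, z,
\qquad z \sim \mathcal{N}(0, I)
$$

At the final step ($t = 1$) the code sets $z = 0$, so no noise is added back.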

```python
from typing import Dict, Tuple
from tqdm import tqdm
import torch
import torch.nn as nn
import torch.nn.functional as F
from torch.utils.data import DataLoader
from torchvision import models, transforms
from torchvision.utils import save_image, make_grid
import matplotlib.pyplot as plt
from matplotlib.animation import FuncAnimation, PillowWriter
import numpy as np
from IPython.display import HTML
from diffusion_utilities import *
```

```python
class ContextUnet(nn.Module):
    def __init__(self, in_channels, n_feat=256, n_cfeat=10, height=28):  # cfeat - context features
        super(ContextUnet, self).__init__()

        # number of input channels, number of intermediate feature maps and number of classes
        self.in_channels = in_channels
        self.n_feat = n_feat
        self.n_cfeat = n_cfeat
        self.h = height  # assume h == w. must be divisible by 4, so 28,24,20,16...

        # Initialize the initial convolutional layer
        self.init_conv = ResidualConvBlock(in_channels, n_feat, is_res=True)

        # Initialize the down-sampling path of the U-Net with two levels
        self.down1 = UnetDown(n_feat, n_feat)      # down1: (batch, n_feat, h/2, w/2)
        self.down2 = UnetDown(n_feat, 2 * n_feat)  # down2: (batch, 2*n_feat, h/4, w/4)

        # original: self.to_vec = nn.Sequential(nn.AvgPool2d(7), nn.GELU())
        self.to_vec = nn.Sequential(nn.AvgPool2d((4)), nn.GELU())

        # Embed the timestep and context labels with a one-layer fully connected neural network
        self.timeembed1 = EmbedFC(1, 2*n_feat)
        self.timeembed2 = EmbedFC(1, 1*n_feat)
        self.contextembed1 = EmbedFC(n_cfeat, 2*n_feat)
        self.contextembed2 = EmbedFC(n_cfeat, 1*n_feat)

        # Initialize the up-sampling path of the U-Net with three levels
        self.up0 = nn.Sequential(
            nn.ConvTranspose2d(2 * n_feat, 2 * n_feat, self.h//4, self.h//4),  # up-sample
            nn.GroupNorm(8, 2 * n_feat),  # normalize
            nn.ReLU(),
        )
        self.up1 = UnetUp(4 * n_feat, n_feat)
        self.up2 = UnetUp(2 * n_feat, n_feat)

        # Initialize the final convolutional layers to map to the same number of channels as the input image
        self.out = nn.Sequential(
            # reduce number of feature maps; args: in_channels, out_channels, kernel_size, stride, padding
            nn.Conv2d(2 * n_feat, n_feat, 3, 1, 1),
            nn.GroupNorm(8, n_feat),  # normalize
            nn.ReLU(),
            nn.Conv2d(n_feat, self.in_channels, 3, 1, 1),  # map to same number of channels as input
        )

    def forward(self, x, t, c=None):
        """
        x : (batch, in_channels, h, w) : input image
        t : (batch, 1, 1, 1)           : time step, scaled to [0, 1] by the caller
        c : (batch, n_cfeat)           : context label (optional)
        """
        # pass the input image through the initial convolutional layer
        x = self.init_conv(x)

        # pass the result through the down-sampling path
        down1 = self.down1(x)      # (batch, n_feat, h/2, w/2)
        down2 = self.down2(down1)  # (batch, 2*n_feat, h/4, w/4)

        # convert the feature maps to a vector and apply an activation
        hiddenvec = self.to_vec(down2)

        # if no context is given, use an all-zero context vector
        if c is None:
            c = torch.zeros(x.shape[0], self.n_cfeat).to(x)

        # embed context and timestep
        cemb1 = self.contextembed1(c).view(-1, self.n_feat * 2, 1, 1)  # (batch, 2*n_feat, 1, 1)
        temb1 = self.timeembed1(t).view(-1, self.n_feat * 2, 1, 1)
        cemb2 = self.contextembed2(c).view(-1, self.n_feat, 1, 1)
        temb2 = self.timeembed2(t).view(-1, self.n_feat, 1, 1)
        #print(f"uunet forward: cemb1 {cemb1.shape}. temb1 {temb1.shape}, cemb2 {cemb2.shape}. temb2 {temb2.shape}")

        up1 = self.up0(hiddenvec)
        up2 = self.up1(cemb1*up1 + temb1, down2)  # add and multiply embeddings
        up3 = self.up2(cemb2*up2 + temb2, down1)
        out = self.out(torch.cat((up3, x), 1))
        return out
```

```python
# hyperparameters

# diffusion hyperparameters
timesteps = 500
beta1 = 1e-4
beta2 = 0.02

# network hyperparameters
device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
n_feat = 64  # 64 hidden dimension feature
n_cfeat = 5  # context vector is of size 5
height = 16  # 16x16 image
save_dir = './weights/'

# construct DDPM noise schedule
b_t = (beta2 - beta1) * torch.linspace(0, 1, timesteps + 1, device=device) + beta1
a_t = 1 - b_t
ab_t = torch.cumsum(a_t.log(), dim=0).exp()
ab_t[0] = 1

# construct model
nn_model = ContextUnet(in_channels=3, n_feat=n_feat, n_cfeat=n_cfeat, height=height).to(device)
```

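Hyperparameters:

- beta1: a DDPM hyperparameter; the value of the noise schedule `b_t` at the first timestep;

- beta2: a DDPM hyperparameter; the value of the noise schedule `b_t` at the last timestep;

- height: the width and height of the (square) image;

- noise schedule: determines the noise level applied to the image at a given timestep;

- S1, S2, S3: the values of the scaling factors.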
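To make the schedule and the scaling factors concrete, here is a small sketch that recomputes `b_t`, `a_t` and `ab_t` with plain Python lists instead of torch tensors. The helper `scaling_factors` is hypothetical, and the mapping of S1, S2, S3 onto the three coefficients is an assumption inferred from the `denoise_add_noise` helper in this section, since the notes do not define them explicitly.

```python
import math

timesteps, beta1, beta2 = 500, 1e-4, 0.02

# Linear noise schedule: b_t grows from beta1 to beta2.
b_t = [beta1 + (beta2 - beta1) * i / timesteps for i in range(timesteps + 1)]
a_t = [1.0 - b for b in b_t]

# ab_t is the running product of a_t, with index 0 reset to 1 (no noise yet).
ab_t, running = [], 1.0
for a in a_t:
    running *= a
    ab_t.append(running)
ab_t[0] = 1.0


def scaling_factors(t):
    """Assumed meaning of S1, S2, S3 for timestep t >= 1 (hypothetical helper)."""
    s1 = 1.0 / math.sqrt(a_t[t])                    # rescales the denoised sample
    s2 = (1.0 - a_t[t]) / math.sqrt(1.0 - ab_t[t])  # weight of the predicted noise
    s3 = math.sqrt(b_t[t])                          # weight of the extra noise z
    return s1, s2, s3


for t in (1, 250, 500):
    print(t, [round(s, 4) for s in scaling_factors(t)])
```

Under this reading, one denoising step is `s1 * (x - s2 * pred_noise) + s3 * z`. The helper is only valid for `t >= 1`, because `ab_t[0]` is 1 and would make `s2` divide by zero; the sampling loop never reaches `t = 0`.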

```python
# helper function; removes the predicted noise (but adds some noise back in to avoid collapse)
def denoise_add_noise(x, t, pred_noise, z=None):
    if z is None:
        z = torch.randn_like(x)
    noise = b_t.sqrt()[t] * z
    mean = (x - pred_noise * ((1 - a_t[t]) / (1 - ab_t[t]).sqrt())) / a_t[t].sqrt()
    return mean + noise

# load in model weights and set to eval mode
nn_model.load_state_dict(torch.load(f"{save_dir}/model_trained.pth", map_location=device))
nn_model.eval()
print("Loaded in Model")

# sample using standard algorithm
@torch.no_grad()
def sample_ddpm(n_sample, save_rate=20):
    # x_T ~ N(0, 1), sample initial noise
    samples = torch.randn(n_sample, 3, height, height).to(device)

    # array to keep track of generated steps for plotting
    intermediate = []
    for i in range(timesteps, 0, -1):
        print(f'sampling timestep {i:3d}', end='\r')

        # reshape time tensor
        t = torch.tensor([i / timesteps])[:, None, None, None].to(device)

        # sample some random noise to inject back in. For i = 1, don't add back in noise
        # setting this to 0 here causes the model to collapse
        z = torch.randn_like(samples) if i > 1 else 0

        eps = nn_model(samples, t)    # predict noise e_(x_t,t)
        samples = denoise_add_noise(samples, i, eps, z)
        if i % save_rate ==0 or i==timesteps or i